How to Avoid Devastating Mistakes in AI Analytics

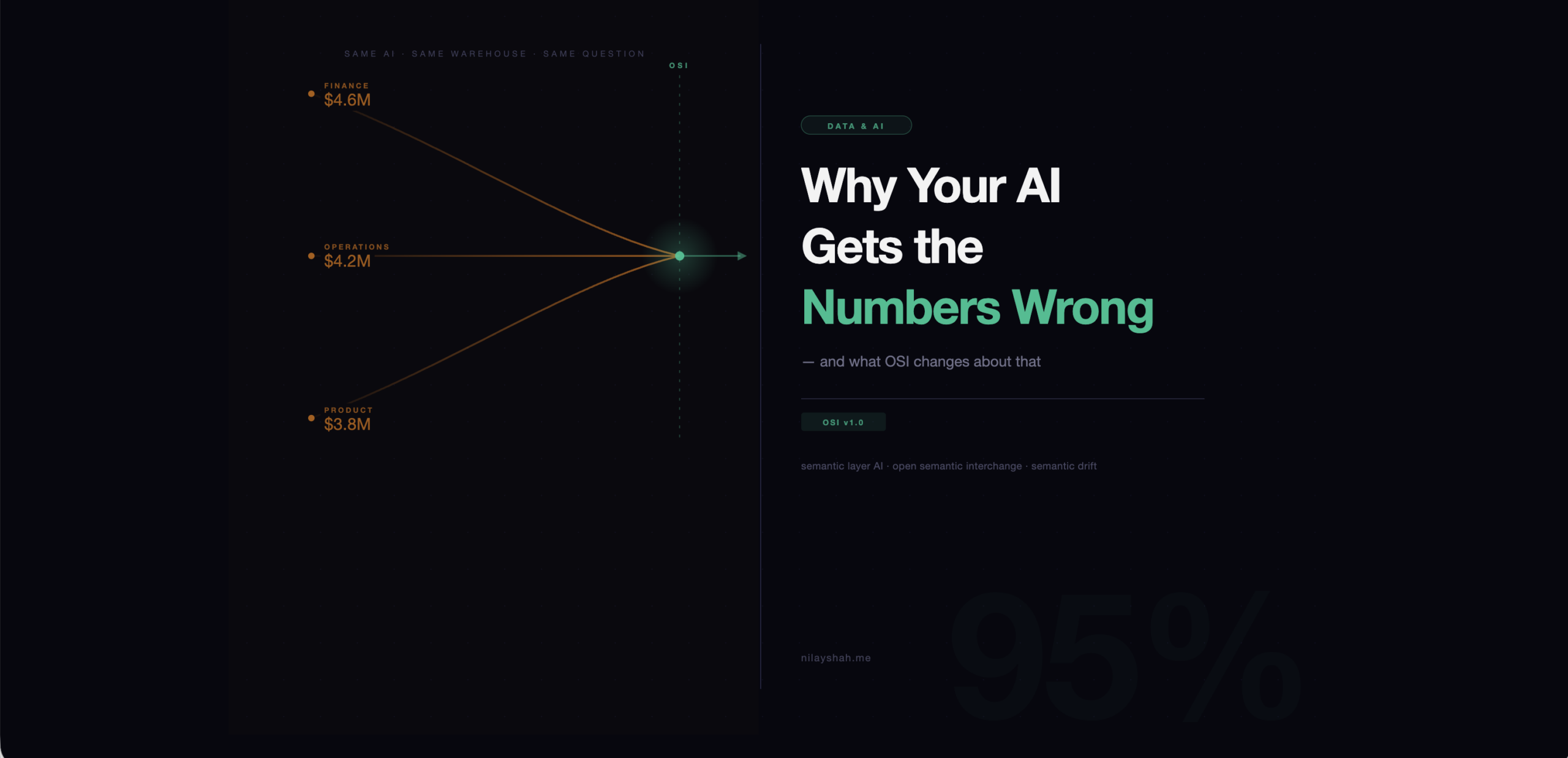

The quarterly business review was going well until someone asked the AI assistant for last month’s revenue. Finance got $4.2M. Operations got $3.8M. The product team got $4.6M. Three different answers from the same AI, the same warehouse, and the same question, each delivered with identical confidence. The failure was not a hallucination. It was a missing contract.

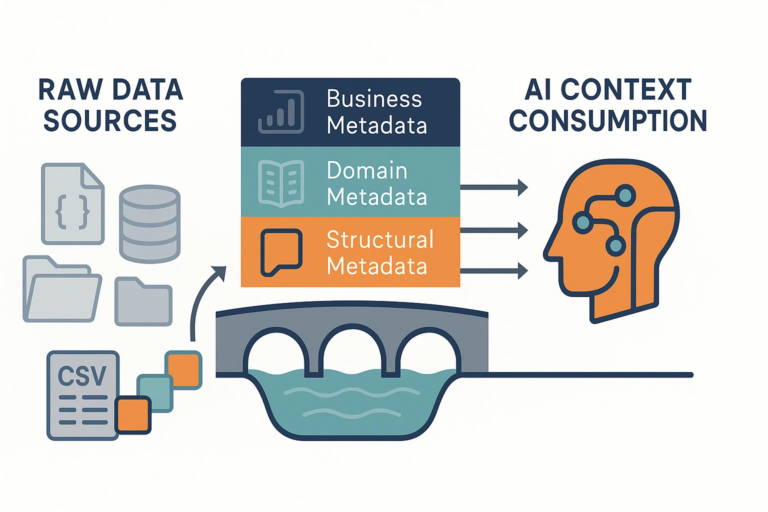

The model queried real data and ran real arithmetic. What it lacked was any instruction about which interpretation of “revenue” was authoritative: recognized versus booked, gross versus net, which date column to filter by, whether refunded orders stay in. That missing instruction is a semantic contract, the foundational layer that every AI analytics system built on a semantic layer depends on to produce reliable answers. The absence of a shared standard for encoding it across tools, platforms, and AI agents is precisely what the Open Semantic Interchange (OSI) specification was built to fix.

Why Your AI Analytics Still Gets the Numbers Wrong and What OSI Changes About That

The popular explanation for AI analytics data errors is hallucination: the model invented something that was not there. But the more consequential failure mode in enterprise AI analytics is quieter. The model computes something real using the wrong rules. It is the old rule: garbage in, garbage out.

It queries the right table with the wrong filter. It aggregates the right column against the wrong date range. It includes trial accounts in a metric that should only count paid customers. The answer arrives plausible, precisely formatted, and wrong. And because it comes with a dollar sign or a percentage or a neatly labeled row, it gets treated as a fact.
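A minimal sketch of how this happens, using a handful of made-up orders. The `Order` fields, the sample data, and the three rule functions are all hypothetical; the point is only that each rule is a defensible reading of "last month's revenue" and each returns a different number from the same rows.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Order:
    amount: float         # gross order value in dollars
    booked_on: date       # when the order was placed
    recognized_on: date   # when the revenue was recognized
    refunded: bool
    is_trial: bool


# Hypothetical orders clustered around the month boundaries.
ORDERS = [
    Order(1000.0, date(2026, 2, 27), date(2026, 3, 2), False, False),
    Order(500.0, date(2026, 3, 10), date(2026, 3, 10), True, False),
    Order(200.0, date(2026, 3, 15), date(2026, 3, 15), False, True),
    Order(800.0, date(2026, 3, 30), date(2026, 4, 1), False, False),
]


def in_march_2026(d: date) -> bool:
    return (d.year, d.month) == (2026, 3)


def booked_gross(orders) -> float:
    """Everything booked in March, refunds and trials included."""
    return sum(o.amount for o in orders if in_march_2026(o.booked_on))


def recognized_net(orders) -> float:
    """Recognized in March, with refunded orders removed."""
    return sum(
        o.amount
        for o in orders
        if in_march_2026(o.recognized_on) and not o.refunded
    )


def recognized_net_paid(orders) -> float:
    """Recognized in March, no refunds, paid customers only."""
    return sum(
        o.amount
        for o in orders
        if in_march_2026(o.recognized_on) and not o.refunded and not o.is_trial
    )


if __name__ == "__main__":
    for rule in (booked_gross, recognized_net, recognized_net_paid):
        print(f"{rule.__name__}: {rule(ORDERS):.2f}")
```

None of the three functions is buggy. Without a contract that names one of them as authoritative, a model has no basis for preferring it over the others.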

Research from dbt Labs illustrates the scale of this gap. When frontier LLMs queried raw data warehouse schemas without semantic context, accuracy on enterprise queries fell below 20%. The same models queried through a governed semantic layer exceeded 95%. The model had not changed. The context had.

The reason the gap is so large is structural. Academic text-to-SQL benchmarks like Spider use clean, normalized schemas with unambiguous column names. GPT-4o scores around 86% on Spider. Real enterprise schemas look very different: columns named amt, val_1, rev_adj; fifteen tables with overlapping date fields; a “customer” definition that evolved three times since the original schema was built. On real enterprise data, that 86% routinely collapses to 10–20%. Models cannot infer business intent from schema structure. They guess. In production, wrong guesses are invisible until someone audits the output.

Semantic Drift Is the Actual Bug

The problem has a name in the data engineering community: semantic drift. It describes what happens when different teams, tools, and systems independently develop their own interpretations of the same business concept.

Marketing defines “active user” as anyone who logged in over the past 90 days. Product defines it as anyone who completed a core action in the past 30 days. Finance excludes free-tier users entirely. Each definition exists somewhere: as a Confluence page, a dbt model comment, or institutional memory held by the analyst who wrote the first query. When AI analytics systems are pointed at data directly, none of that documented intent transfers. The model sees a table and makes an inference.
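The three definitions can be written as three small predicates over the same user table. This is an illustrative sketch: the `User` fields and sample rows are invented, and the finance rule assumes finance starts from the product definition and then drops free-tier users, which the definitions above do not actually specify.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional


@dataclass(frozen=True)
class User:
    name: str
    last_login: date
    last_core_action: Optional[date]  # None if no core action was ever completed
    plan: str                         # "free" or "paid"


TODAY = date(2026, 3, 31)  # fixed reference date so the counts are reproducible


def days_ago(n: int) -> date:
    return TODAY - timedelta(days=n)


# Hypothetical users.
USERS = [
    User("a", days_ago(5), days_ago(5), "paid"),
    User("b", days_ago(60), days_ago(60), "paid"),
    User("c", days_ago(10), days_ago(10), "free"),
    User("d", days_ago(20), None, "paid"),
    User("e", days_ago(120), None, "free"),
]


def marketing_active(u: User) -> bool:
    """Logged in during the past 90 days."""
    return (TODAY - u.last_login).days <= 90


def product_active(u: User) -> bool:
    """Completed a core action during the past 30 days."""
    return (
        u.last_core_action is not None
        and (TODAY - u.last_core_action).days <= 30
    )


def finance_active(u: User) -> bool:
    """Assumption: the product rule, restricted to paid plans."""
    return product_active(u) and u.plan == "paid"


def count(rule) -> int:
    return sum(1 for u in USERS if rule(u))


if __name__ == "__main__":
    for rule in (marketing_active, product_active, finance_active):
        print(rule.__name__, count(rule))
```

On these five rows the three teams would report four, two, and one active users respectively, all from the same table.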

Salesforce researchers studying this pattern found that organizational trust in AI analytics erodes quickly once users discover inconsistent results. The practical fallout: data teams that skip semantic governance and jump directly to AI integration report spending roughly three times as long debugging outputs as building new capabilities. The bugs are hard to reproduce, hard to explain to non-technical stakeholders, and immune to model-layer patches. You cannot fix a semantic ambiguity by upgrading the LLM.

What OSI Actually Defines

On January 27, 2026, a working group that included Snowflake, dbt Labs, Salesforce, Databricks, ThoughtSpot, Cube, Informatica, Collibra, and more than twenty other organizations published version 1.0 of the Open Semantic Interchange specification under an Apache 2.0 license. It is a YAML-based, vendor-neutral format for encoding semantic layer constructs in a way that any tool, platform, or AI agent can interpret consistently.

The spec defines five core object types. Datasets represent logical business entities, the fact and dimension tables that underlie your reporting, with explicit source mappings, primary keys, and field definitions. Metrics are the actual formulas: the canonical calculations for revenue, churn, conversion, and every other number your organization tracks. Dimensions are the categorical attributes used to slice those metrics: region, product line, cohort, time. Relationships encode how entities connect. And contexts carry the higher-level intent: what this model covers, how it should be used, what edge cases and caveats apply.
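To make the five object types concrete, here is a rough Python sketch of how they relate to one another. It is not the OSI schema: the class names mirror the concepts above, but every field name, the `SemanticModel` wrapper, and the `dangling_references` check are assumptions made for illustration. The actual specification is YAML and defines its own keys.

```python
from dataclasses import dataclass, field


@dataclass
class Dataset:
    name: str
    source: str        # physical table the logical entity maps to
    primary_key: str
    fields: list[str]


@dataclass
class Metric:
    name: str
    expression: str    # canonical formula, kept as text in this sketch
    dataset: str       # name of the Dataset the formula runs against


@dataclass
class Dimension:
    name: str
    dataset: str
    column: str


@dataclass
class Relationship:
    from_dataset: str
    to_dataset: str
    join_key: str


@dataclass
class Context:
    description: str
    caveats: list[str] = field(default_factory=list)


@dataclass
class SemanticModel:
    datasets: list[Dataset]
    metrics: list[Metric]
    dimensions: list[Dimension]
    relationships: list[Relationship]
    context: Context

    def dangling_references(self) -> list[str]:
        """List metrics, dimensions, and relationships that point at unknown datasets."""
        known = {d.name for d in self.datasets}
        problems = []
        for m in self.metrics:
            if m.dataset not in known:
                problems.append(f"metric {m.name} -> {m.dataset}")
        for d in self.dimensions:
            if d.dataset not in known:
                problems.append(f"dimension {d.name} -> {d.dataset}")
        for r in self.relationships:
            for end in (r.from_dataset, r.to_dataset):
                if end not in known:
                    problems.append(f"relationship {r.join_key} -> {end}")
        return problems


# Hypothetical retail model.
MODEL = SemanticModel(
    datasets=[
        Dataset("orders", "warehouse.fct_orders", "order_id",
                ["order_id", "customer_id", "amount", "region"]),
        Dataset("customers", "warehouse.dim_customers", "customer_id",
                ["customer_id", "plan"]),
    ],
    metrics=[Metric("recognized_revenue", "SUM(amount)", "orders")],
    dimensions=[Dimension("region", "orders", "region")],
    relationships=[Relationship("orders", "customers", "customer_id")],
    context=Context("Retail analytics", ["Refunded orders are excluded."]),
)

if __name__ == "__main__":
    print(MODEL.dangling_references())
```

The structural check is the kind of validation a shared format makes possible: once every object refers to others by name, a broken reference is detectable before any query runs.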

What makes OSI technically distinct from earlier standardization attempts is the ai_context field, a first-class component of the specification designed explicitly for AI consumers. Previous semantic standards were built for BI tools: they told a visualization layer how to join tables and format numbers. OSI introduces a dedicated block where model authors provide natural language guidance for any AI system consuming the spec. In practice, an ai_context entry might read: “This model covers retail analytics. For revenue calculations, use the recognized_revenue metric, which applies refund exclusion and VAT adjustment logic. Do not use the order_total field directly.” That instruction travels with the semantic model, versioned alongside the business logic it describes, not stored as a separate prompt or documentation page that will eventually fall out of sync.
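One way a consuming application might use that guidance is to fold it into the system prompt it sends to the model. The sketch below assumes the semantic model has already been parsed into a plain dictionary; the dictionary keys other than `ai_context`, the metric description, and the `build_system_prompt` helper are all hypothetical and not part of the specification.

```python
# Hypothetical, already-parsed semantic model. Only the ai_context wording
# comes from the example above; the other keys are illustrative.
MODEL = {
    "name": "retail_analytics",
    "ai_context": (
        "This model covers retail analytics. For revenue calculations, use "
        "the recognized_revenue metric, which applies refund exclusion and "
        "VAT adjustment logic. Do not use the order_total field directly."
    ),
    "metrics": {
        "recognized_revenue": "Revenue after refund exclusion and VAT adjustment.",
    },
}


def build_system_prompt(model: dict) -> str:
    """Combine the authors' guidance and the approved metric list into one prompt."""
    lines = [
        f"Semantic model: {model['name']}",
        "",
        "Guidance from the model authors:",
        model["ai_context"],
        "",
        "Approved metrics:",
    ]
    for name, description in sorted(model["metrics"].items()):
        lines.append(f"- {name}: {description}")
    return "\n".join(lines)


if __name__ == "__main__":
    print(build_system_prompt(MODEL))
```

Because the prompt is generated from the model rather than written by hand, a change to the guidance or to a metric definition reaches the AI consumer in the same release as the business logic itself.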

This design resolves a fragmentation problem that quietly undermines most enterprise AI deployments. The data team maintains a semantic layer. The AI team maintains a separate knowledge base describing the same concepts in different terms. Over time, the two diverge. OSI collapses them into a single artifact. Business logic definition and AI instruction are exchanged as a unit.

The spec also treats governance as a first-class attribute. Access policies, row-level security rules, and metric-level restrictions are embedded in the semantic model rather than enforced only at query time. When an AI agent receives an OSI-compliant model, it also receives the governance envelope within which it is permitted to operate. This prevents a failure mode that has surfaced in early agentic deployments: agents that have data access but generate queries that violate governance rules because those rules were never placed where an agent could read them.
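A sketch of what reading the governance envelope could look like on the agent side. The `GovernanceEnvelope` structure, the role names, and `plan_query` are assumptions for illustration only; the spec's actual representation of access policies and row-level rules may differ.

```python
from dataclasses import dataclass
from typing import Dict, FrozenSet


@dataclass(frozen=True)
class GovernanceEnvelope:
    # metric name -> roles permitted to query it
    metric_roles: Dict[str, FrozenSet[str]]
    # role -> row-level predicate that must be applied to every query
    row_filters: Dict[str, str]


# Hypothetical policies.
ENVELOPE = GovernanceEnvelope(
    metric_roles={
        "recognized_revenue": frozenset({"finance", "executive"}),
        "active_users": frozenset({"finance", "product", "marketing"}),
    },
    row_filters={"marketing": "region = 'EMEA'"},
)


def plan_query(envelope: GovernanceEnvelope, role: str, metric: str) -> dict:
    """Return a query plan, or refuse before any SQL is generated."""
    allowed = envelope.metric_roles.get(metric)
    if allowed is None:
        raise PermissionError(f"metric {metric!r} is not defined in the model")
    if role not in allowed:
        raise PermissionError(f"role {role!r} may not query {metric!r}")
    # Attach the role's mandatory row filter, if it has one.
    return {"metric": metric, "row_filter": envelope.row_filters.get(role)}


if __name__ == "__main__":
    print(plan_query(ENVELOPE, "marketing", "active_users"))
    try:
        plan_query(ENVELOPE, "marketing", "recognized_revenue")
    except PermissionError as exc:
        print("refused:", exc)
```

The design choice worth noting is that the check happens at planning time, from rules the agent can read, rather than relying only on the warehouse to reject the query later.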

The ai_context Bridge to Agentic AI Analytics

The relevance of OSI extends well beyond fixing inconsistent dashboards. It is designed for a world where AI agents query data autonomously without a human reviewing each query before it executes.

Agentic AI analytics describes systems where AI agents compose multi-step analyses, route questions to appropriate data sources, and surface conclusions without step-by-step human guidance. An agent that can choose which table to query, which metric to apply, and which filter to use is also an agent that can choose wrong. In financial reporting, supply chain planning, or customer risk modeling, a wrong choice is more than an inconvenience.

ThoughtSpot’s work on agentic semantic layers identifies the binding constraint precisely: an agent is only as trustworthy as the context it operates within. An agent given raw schema access will make plausible but unreliable decisions. An agent operating within an OSI-compliant semantic contract, with ai_context guidance, governance constraints, and explicit metric definitions, can make decisions that are both plausible and auditable. The semantic model becomes the agent’s operating envelope.

The practical consequence is that data engineering work and AI agent design work are converging, not running in parallel. The quality of the semantic layer determines the ceiling on agentic reliability. Teams investing in semantic modeling today are not doing pre-AI infrastructure work. They are building the foundation that makes agentic AI viable at production scale.

OSI v1.0 launched with support from more than thirty organizations across the data platform ecosystem. Phase 2, running through the end of 2026, targets native support in fifty-plus platforms alongside domain-specific extensions for regulated verticals including finance and healthcare. The 2027 roadmap targets de facto standard status and a shared marketplace for semantic model templates that organizations can adopt and adapt. The direction is clear even if the timeline remains ambitious.

The Contrarian Take

Here is the version of this story the press release omits. OSI standardizes the exchange of semantic models. It says nothing about whether those models are correct.

A well-adopted OSI ecosystem means that your poorly defined revenue metric will now propagate consistently to every AI agent, BI tool, and downstream consumer that reads your semantic model. It will be wrong everywhere, uniformly and efficiently. Standardization without quality governance does not solve semantic drift; it industrializes it.

The gap OSI does not close is the hard work of actually agreeing on what metrics mean. That requires cross-functional alignment between finance, product, engineering, and data. It requires someone to be accountable for the semantic model the way a product manager is accountable for a product. It requires treating the metric catalog as a first-class engineering asset that is versioned, reviewed, and actively maintained, not a documentation artifact that someone updated once and forgot. OSI gives you the container. Filling it correctly is still entirely your problem.
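Treating the catalog as an engineering asset can start with something as plain as a lint step in CI. The sketch below is one hypothetical way to do it: the required keys (`owner`, `version`, `definition`, `reviewed_by`) and the catalog layout are assumptions, not anything OSI mandates, and the check cannot tell whether a definition is correct, only whether someone has signed their name to it.

```python
# Keys every metric entry must carry before it ships (an assumed policy).
REQUIRED_KEYS = ("owner", "version", "definition", "reviewed_by")

# Hypothetical metric catalog.
CATALOG = {
    "revenue": {
        "owner": "finance",
        "version": "2.1.0",
        "definition": "Recognized revenue net of refunds and VAT.",
        "reviewed_by": "finance, product, data",
    },
    "active_user": {
        "owner": "",
        "version": "0.3.0",
        "definition": "Completed a core action in the past 30 days.",
    },
}


def lint_catalog(catalog: dict) -> list:
    """Return one message per missing or empty required key."""
    problems = []
    for name, entry in sorted(catalog.items()):
        for key in REQUIRED_KEYS:
            if not entry.get(key):
                problems.append(f"{name}: missing {key}")
    return problems


if __name__ == "__main__":
    for problem in lint_catalog(CATALOG):
        print(problem)
```

A failing lint blocks the merge, which turns "someone should own this metric" from a meeting outcome into a precondition for publishing the model.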

Organizations that adopt OSI as a technical shortcut and skip the governance work will find that their AI Analytics systems are now more consistent in the wrong direction. Consistency is a multiplier. It amplifies whatever is in the semantic model, accurate or not.

Tool Worth Attention: dbt Semantic Layer

The dbt Semantic Layer, built on the MetricFlow framework, is the most mature implementation of the patterns OSI formalizes. It lets data teams define metrics, dimensions, and entities directly in dbt models and expose those definitions to downstream consumers through a consistent metrics API. The MetricFlow framework contributed directly to the OSI spec’s YAML format; the dbt semantic model schema is the closest thing to a reference implementation the spec currently has.

What makes it worth attention specifically in the OSI context is the adoption pathway it creates. Organizations already using dbt for transformation are a short configuration step away from a governed semantic layer. That layer can be exported in OSI-compatible format and consumed by any platform that reads the spec. For teams building toward agentic architectures, it is the most direct path from “we have a data warehouse” to “our AI agents have a trusted semantic contract.”

Cube, Atlan, and ThoughtSpot all have their own semantic layer implementations moving toward OSI compliance. But dbt is where transformation logic already lives, and the closer the semantic model is to the transformation layer where business rules are first written, the harder it is for the two to drift apart.

The version of AI analytics that enterprise organizations are genuinely building toward (agents that operate autonomously, query data on demand, and surface consequential decisions without step-by-step human review) requires a level of semantic reliability that raw warehouse access will never provide. OSI creates the infrastructure for that reliability: a shared, versioned, portable contract between the people who define business logic and every system that consumes it.

But the spec is only useful if the definitions inside it are trustworthy. Before any exchange protocol matters, someone in your organization needs to answer two boring, important questions: what exactly is a customer, and what exactly is revenue? Write it down. Get finance and product and engineering to agree on it. Put it in the semantic model. Version it.

The standard is ready. The definitions are still your job.

Some Important Links

- Open Semantic Interchange OSI

- OSI GitHub Repository v1.0 Specification

- Snowflake: Open Semantic Interchange Initiative Announcement

- Snowflake: OSI Specification Finalized

- Snowflake: OSI Initiative Expands Partners

- dbt Labs: What the OSI Spec Means for Metrics, Semantics, and AI

- dbt Labs: How a Semantic Layer Prevents AI Hallucinations in Analytics

- dbt Labs: Semantic Layer vs. Text-to-SQL 2026 Benchmark

- Salesforce: Ending Semantic Drift Unified Business Logic Foundation

- ThoughtSpot: The Agentic Semantic Layer and OSI

- ThoughtSpot: What Is Open Semantic Interchange A 2026 Guide

- Promethium: Enterprise Text-to-SQL Accuracy Benchmarks

- Brooklyn Data: Where Are We With Semantic Layers (OSI Edition)

- VisionWrights: The Semantic Layer Why Your AI Gives Wrong Answers

- Contextual AI: Why Does Enterprise AI Hallucinate?