What Is the Semantic Layer? Enterprise AI's Missing Link

Most AI systems deployed inside enterprises today are built directly on top of raw data. They point an LLM at a warehouse schema and hope for the best. The results are predictable: metrics that don’t match the dashboard, SQL that joins the wrong tables, answers that contradict themselves from one session to the next. The systems that actually work reliably share a common architectural trait: they don’t expose raw data to AI at all. They route every query through a governed, semantically consistent abstraction layer. That layer has a name, the semantic layer, and the data industry has been building versions of it for over a decade. Yet almost nobody writing about successful enterprise AI use cases takes it seriously.

A decade of investing in clean data and then ignoring it for AI

To understand why this gap exists, let’s look at what the data industry was doing between roughly 2012 and 2022.

Amazon Redshift launched in October 2012 and catalyzed what Tristan Handy, co-founder of dbt Labs, later called the birth of the modern data stack. Redshift, alongside Google BigQuery, Snowflake (2014), and Databricks, separated compute from storage, made columnar analytics economically accessible, and eliminated the on-premises capacity planning that had dominated enterprise data infrastructure for two decades. The Extract-Load-Transform (ELT) pattern replaced ETL. Data could land raw in the warehouse and be shaped in place, at scale, cheaply.

What followed was a decade of investment in making that raw data trustworthy. Fivetran standardized data ingestion from hundreds of SaaS sources; Stitch, Airbyte, and others followed. dbt, created in 2016 at Fishtown Analytics, brought software engineering discipline to the transformation layer: version control, testing, documentation, modular SQL, and a lineage graph that showed exactly how each table was derived. The “Analytics Engineer” role emerged, occupying the space between data engineering and analysis, and dbt became its primary tool. By the early 2020s, dbt had over 60,000 teams building on it. The modern data stack had come together: ingest with Fivetran, transform with dbt, query with Snowflake or BigQuery, visualize with Looker, Tableau, or Power BI.

The semantic layer sat within this stack, sometimes explicitly, often implicitly. Looker introduced LookML around 2012–2013, embedding metric definitions, join paths, and access controls directly inside the BI tool. Google’s acquisition of Looker in 2020 validated the idea that business logic should live in code, not spreadsheets. Looker’s semantic layer was an early, BI-embedded example of a concept that was slowly becoming independent: the idea that metrics, dimensions, and relationships should be defined once, in a governed location, and consumed everywhere.

When GenAI arrived in force in 2023, enterprises made a natural-seeming but costly decision: they pointed LLMs directly at their modern data stack. Connect the AI to the warehouse; let it write SQL. The decade of governance work (the clean transformations, the tested models, the agreed metric definitions) was largely bypassed.

Figure: Timeline from the cloud ELT era to AI: cloud ELT era begins → BI-embedded semantics → SQL-as-code transforms → modern stack consolidates → headless semantic layer → LLMs hit raw schemas → semantic grounding standard.

Why LLMs fail on raw data lake schemas

The gap between demo performance and production reliability in enterprise AI is not a fine-tuning problem. It is structural, and new research published through early 2026 makes that case more precisely than ever.

The benchmark problem itself has evolved. For years, the field measured text-to-SQL progress against Spider 1.0, which uses databases averaging fewer than ten tables with sensibly named columns. On Spider 1.0, GPT-4 with the DAIL-SQL framework achieved 86.6% execution accuracy, results that gave many enterprise teams false confidence that the problem was largely solved. The BIRD benchmark (2023) forced a correction: using dirty real-world data across 95 databases, it showed GPT-4 at roughly 55% accuracy against a 93% human baseline, a 38-point gap that held even as newer models arrived.

The benchmark community has continued moving the goalposts toward enterprise reality. LiveSQLBench, released by the BIRD team in May 2025 and designed to be contamination-free and continuously updated, tests models against databases ranging from end-user scale (around 127 columns) up to industrial-scale schemas with 1,340-plus columns each. On the base-lite version, the easiest tier, the best-performing model, o3-mini, achieves only 47.78% success. A cluster of top models, including GPT-4.1, o4-mini, and Gemini 2.5 Flash with thinking, lands in the 37–45% range. On the industrial-scale version, with schemas averaging around 54 tables and 1,000-plus columns, prompts alone average 84,000 tokens per query, and accuracy degrades further still. For these schemas, the benchmark also introduces “Business Rule Drift”: external knowledge that changes, and contains inconsistencies, across releases, specifically to model enterprise environments where documentation and data definitions do not stay in sync.

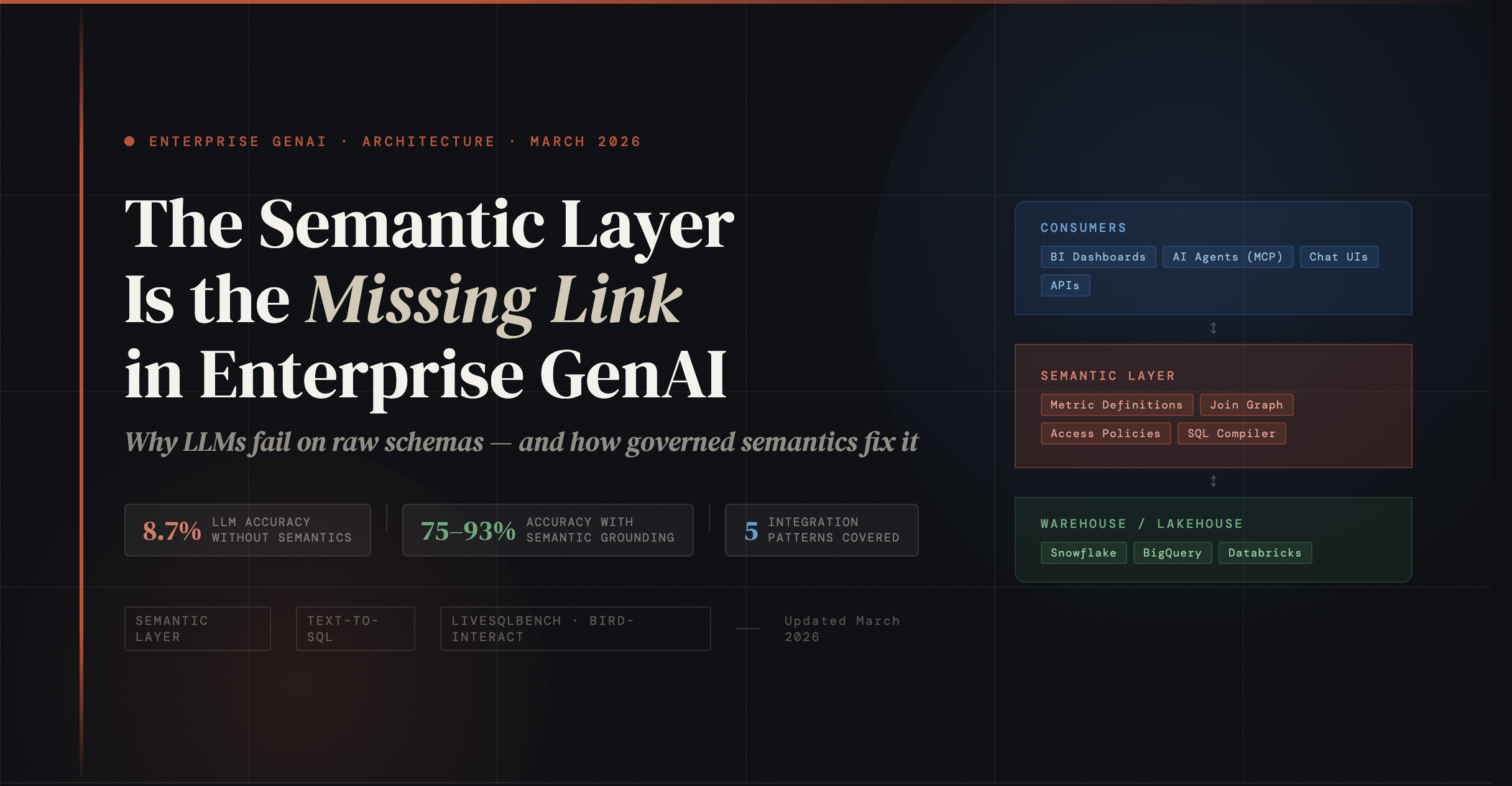

BIRD-Interact, accepted as an oral paper at ICLR 2026, marks the most significant methodological advance in this space. Rather than testing single-turn query generation, it evaluates multi-turn database interactions: the agentic, conversational workflows that real enterprise AI systems must actually execute. BIRD-Interact couples each database with a hierarchical knowledge base, metadata files, and a function-driven user simulator, allowing models to ask clarifying questions, retrieve context, and recover from execution errors autonomously across up to 11,796 dynamic interactions per evaluation run. The results are stark: GPT-5 (Medium) completes only 8.67% of tasks in the conversational setting and 17% in the agentic setting on the full 600-task suite. The best single-turn models, which can score adequately on traditional BIRD, collapse when interaction, ambiguity resolution, and error recovery are required simultaneously. The benchmark’s conclusion is unambiguous: single-turn accuracy on clean benchmarks is a poor proxy for enterprise-grade reliability.

A separate benchmark, published on OpenReview in late 2025, focuses on enterprise SQL debugging rather than generation and finds that even state-of-the-art reasoning models struggle. Enterprise SQL in production averages over 140 lines, with abstract syntax trees of considerable depth. Claude Sonnet 4 achieves only 36.46% success on one variant of this benchmark; most models fail to reach 20%. The benchmark team identifies a fundamental problem for self-correction in their test set: 97% of incorrect SQL queries produce no execution error, so self-debugging loops have no signal to work with. The model cannot detect that its SQL is wrong because it runs without complaint and returns plausible-looking data.

There is an important nuance here that deserves fair treatment. MotherDuck published an experiment in February 2026 in which frontier models (Claude Opus 4.5, GPT-5.2, and Gemini 3 Flash) connected to a database via MCP achieved 95% accuracy on the BIRD Mini-Dev split without any semantic layer. Their analysis makes a real point: on BIRD Mini-Dev, databases average seven tables (none has more than 13), joins are mostly one-to-many, and schemas can be understood in minutes. Under realistic LLM-as-judge scoring, rather than strict exact-match execution accuracy, frontier models perform well on clean, small schemas. Their conclusion, that well-modeled data is itself a semantic layer, is not wrong. It is, however, a result that holds precisely where schemas are small, clean, well-documented, and few-table, which is exactly what enterprise schemas are not. LiveSQLBench exists because the research community recognized that BIRD Mini-Dev no longer reflects the demands of real enterprise conditions.

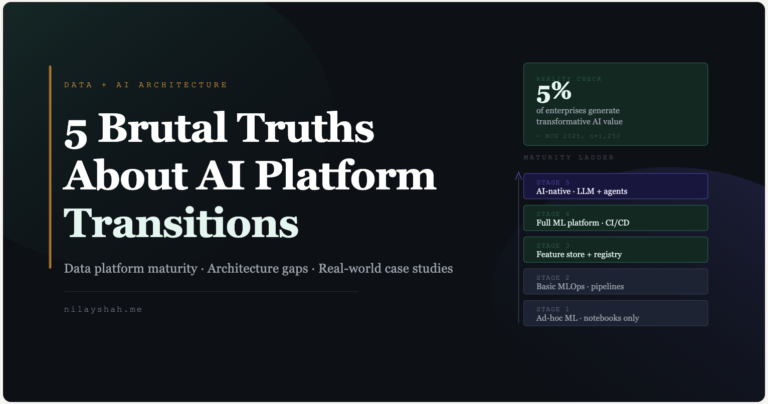

The real failure profile for production enterprise AI in early 2026 looks like this. McKinsey’s 2025 State of AI survey found that 71% of organizations report regular GenAI use, but only 17% attribute more than 5% of EBIT to it, a sharp signal that demos are not translating into reliable production value. Enterprise architects reflecting on 2025 deployment experience estimate that approximately 7 in 10 GenAI projects never moved past the pilot stage, with the primary causes being hallucinations in production, data governance gaps, and schema complexity that proved incompatible with LLM-native SQL generation. RAG pipelines face a parallel credibility problem: each stage (embedding, retrieval, context assembly, generation) can fail independently, and production incidents typically involve several stage failures compounding silently before a user reports a wrong answer.

The four failure modes that remain structurally persistent regardless of model generation are: faulty joins on ambiguous foreign-key relationships, aggregation mistakes caused by filter logic applied at the wrong grain, missing filters because business rules are implicit in organizational convention rather than schema documentation, and hallucinated column names when the model guesses at schema elements not present in the retrieved context. These are not capability failures of any particular LLM. They are the predictable outcome of asking a language model to reconstruct business meaning from technical structure without an explicit, governed bridge between the two.
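To make the grain failure concrete, here is a minimal sketch using Python's built-in sqlite3 module and a hypothetical two-table schema (the table names, column names, and values are invented for illustration). Both queries execute without error, which is exactly why self-debugging has no signal; only one of them answers "total order revenue" correctly.

```python
import sqlite3

# Hypothetical toy schema: one row per order, and a line-items table with
# several rows per order (a one-to-many relationship).
conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE orders (order_id INTEGER PRIMARY KEY, order_total REAL);
CREATE TABLE order_items (item_id INTEGER PRIMARY KEY, order_id INTEGER, sku TEXT);
INSERT INTO orders VALUES (1, 100.0), (2, 50.0);
INSERT INTO order_items VALUES (1, 1, 'A'), (2, 1, 'B'), (3, 1, 'C'), (4, 2, 'A');
""")

# Plausible-looking generated SQL: joining to line items before summing an
# order-grain column repeats each order_total once per item (fan-out).
naive = conn.execute("""
    SELECT SUM(o.order_total)
    FROM orders o JOIN order_items i ON i.order_id = o.order_id
""").fetchone()[0]

# Correct: aggregate at the order grain, where order_total is defined.
correct = conn.execute("SELECT SUM(order_total) FROM orders").fetchone()[0]

print(naive, correct)  # 350.0 150.0
```

A semantic layer avoids this by attaching the aggregation to the grain it belongs to, so no consumer has to rediscover that order_total must not be summed after a join to line items.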

What the semantic layer actually does

The semantic layer is a metadata abstraction that sits between physical data storage and the tools or agents that consume data. It translates the technical representation (tables, columns, join keys) into business-meaningful concepts: metrics, dimensions, relationships, hierarchies, and access policies.

The concept was formalized in BI tooling during the early 2000s (Business Objects, Cognos, MicroStrategy all had some version of it) but it was Looker’s LookML, introduced around 2012–2013, that brought the idea into the modern code-first era. Instead of GUI-configured metadata inside a proprietary BI tool, LookML was a version-controlled, code-based definition of business logic. You defined measures, dimensions, explores (join paths), and access_filters in YAML-like syntax, committed them to git, and deployed. Every LookML-based dashboard and query ran through the same definitions.

dbt’s MetricFlow, which became the engine of the dbt Semantic Layer, extended this idea to the transformation layer itself. MetricFlow lets teams define entities, measures, dimensions, join relationships, and metrics directly alongside their dbt models, in version-controlled YAML. A metric like monthly_recurring_revenue gets a single definition (its aggregation logic, grain, dimensions, and time-window semantics), and MetricFlow compiles queries against it into optimized, warehouse-specific SQL at query time. Cube, an open-source semantic layer with a different architecture, achieves similar ends through a data model layer that can be queried via REST, GraphQL, or Postgres-compatible SQL, with a pre-aggregation engine for performance.

The critical architectural point is that these tools do not store data. They compile. MetricFlow, Cube, and AtScale generate SQL at query time and push it down to the warehouse for execution. The semantic layer is a compiler and a contract, not a database. The same definitions that serve a Looker dashboard serve a Jupyter notebook, a Slack integration, and now, crucially, an AI agent.
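To make "a compiler, not a database" concrete, here is a deliberately tiny sketch in Python. The Metric and Dimension classes, the JOINS table, and compile_query are hypothetical and far simpler than the MetricFlow, Cube, or AtScale data models; they only show that named definitions plus a governed join path are enough to emit SQL without reading any data.

```python
from dataclasses import dataclass

# Hypothetical, heavily simplified definitions; not the MetricFlow, Cube,
# or AtScale data model.
@dataclass(frozen=True)
class Metric:
    name: str
    expression: str  # aggregation logic, defined once
    table: str       # table the metric is computed from

@dataclass(frozen=True)
class Dimension:
    name: str
    column: str      # fully qualified physical column
    table: str

# Governed join paths: (from_table, to_table) -> ON condition.
JOINS = {("orders", "customers"): "orders.customer_id = customers.customer_id"}
METRICS = {"revenue": Metric("revenue", "SUM(orders.order_total)", "orders")}
DIMENSIONS = {"region": Dimension("region", "customers.region", "customers")}

def compile_query(metric_names: list[str], dimension_names: list[str]) -> str:
    """Compile named metrics and dimensions into SQL text; no data is read."""
    metrics = [METRICS[m] for m in metric_names]      # KeyError if undefined
    dims = [DIMENSIONS[d] for d in dimension_names]   # KeyError if undefined
    base = metrics[0].table  # simplification: all metrics share one base table
    select = [f"{d.column} AS {d.name}" for d in dims]
    select += [f"{m.expression} AS {m.name}" for m in metrics]
    sql = f"SELECT {', '.join(select)}\nFROM {base}"
    joined: list[str] = []
    for d in dims:
        # Each needed table is joined once, using the governed join path.
        if d.table != base and d.table not in joined:
            joined.append(d.table)
            sql += f"\nJOIN {d.table} ON {JOINS[(base, d.table)]}"
    if dims:
        sql += "\nGROUP BY " + ", ".join(d.column for d in dims)
    return sql

print(compile_query(["revenue"], ["region"]))
```

Because the join condition lives in JOINS rather than in a prompt, every consumer that asks for revenue by region gets the same join, and an unknown metric or dimension name raises an error instead of producing SQL.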

What this gives an AI system that raw SQL generation cannot is threefold. First, unambiguous definitions: “revenue” has one meaning, expressed in code, tested, and agreed upon by the business. Second, a constrained query space: instead of the full vocabulary of SQL against an arbitrary schema, the LLM works with a curated set of named metrics, dimensions, and filters. Third, enforced access control: row-level security, column masking, and team-level data policies apply uniformly to every query, whether submitted by a BI dashboard, a notebook, or an AI agent.

How the semantic layer grounds AI

The practical mechanism by which the semantic layer improves AI accuracy is a shift in what the LLM is asked to do. Instead of generating arbitrary SQL against a raw schema, which requires it to understand table relationships, column semantics, join logic, and aggregation rules all at once, the LLM generates a structured, constrained request against a named set of metrics and dimensions. The semantic layer’s SQL compiler handles everything else deterministically.

Cube’s engineering team describes it directly: when an LLM generates a Cube API request rather than raw SQL, it is choosing from named semantic objects, with entities as nouns, dimensions as adjectives, and measures as quantitative descriptions. “It is much less likely to make a technically valid hallucination,” their documentation states, because the query surface is bounded. AtScale takes a comparable approach: LLMs generate queries against a virtual single logical table comprising KPIs and metadata as columns. The query engine invisibly handles all physical complexity (joins, aggregation, filtering) in the translation to warehouse SQL.
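A minimal sketch of what a bounded query surface buys, assuming the LLM has returned a JSON request shaped like Cube's query format (measures, dimensions, and filters that name a member). The member names, the hard-coded vocabulary, and validate_request are hypothetical; a real deployment would load the vocabulary from the semantic layer's metadata.

```python
import json

# Hypothetical vocabulary; a real system would load this from the semantic
# layer's metadata instead of hard-coding it.
MEASURES = {"orders.total_revenue", "orders.count"}
DIMENSIONS = {"orders.status", "orders.created_at", "customers.region"}

class UnknownMemberError(ValueError):
    """Raised when a request names something the semantic model does not define."""

def validate_request(raw: str) -> dict:
    """Parse an LLM-produced JSON request and reject members outside the model."""
    query = json.loads(raw)
    unknown = [m for m in query.get("measures", []) if m not in MEASURES]
    unknown += [d for d in query.get("dimensions", []) if d not in DIMENSIONS]
    unknown += [f["member"] for f in query.get("filters", [])
                if f["member"] not in MEASURES | DIMENSIONS]
    if unknown:
        raise UnknownMemberError(f"not in semantic model: {unknown}")
    return query

ok = validate_request(
    '{"measures": ["orders.total_revenue"], "dimensions": ["customers.region"],'
    ' "filters": [{"member": "orders.status", "operator": "equals", "values": ["completed"]}]}'
)

try:
    # A plausible-sounding but undefined measure fails loudly instead of
    # turning into SQL that runs and returns the wrong number.
    validate_request('{"measures": ["orders.net_revenue_adj"]}')
except UnknownMemberError as err:
    print(err)
```

The design choice is that a hallucinated name becomes an explicit error at the boundary, the opposite of raw text-to-SQL, where a guessed column that happens to exist produces a silent wrong answer.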

The accuracy improvements when semantic grounding is applied are substantial. AtScale’s internal benchmarks on 40 TPC-DS business questions show accuracy jumping from 20% to 92.5% when queries pass through the semantic layer, a 4.6x improvement, with accuracy on high-complexity queries going from 10% to 100%. Research by Sequeda et al. at data.world (2023) found that enriching prompts with knowledge graph context improved GPT-4 zero-shot NL-to-SQL accuracy on enterprise insurance data from 16.7% to 54.2%, a 3.2x improvement. Google’s internal testing shows Looker’s LookML layer reducing data errors in Gemini natural language queries by approximately two-thirds. dbt Labs reports a 3x accuracy improvement in their “Ask dbt” chatbot when grounded in the Semantic Layer versus an ungrounded baseline.
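The multipliers above are ratios of accuracy rates, not percentage-point gains. A quick worked check of the two figures that report both endpoints:

```latex
\frac{92.5\%}{20\%} = 4.625 \approx 4.6\times \quad (+72.5 \text{ points}),
\qquad
\frac{54.2\%}{16.7\%} \approx 3.2\times \quad (+37.5 \text{ points})
```

Ratios look largest when the baseline is low, so the absolute gain is worth reading alongside them. The two studies also use different question sets (TPC-DS versus enterprise insurance data), so their multipliers are not directly comparable.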

The governance benefits are equally significant. When access policies are defined in the semantic layer and enforced at query compilation time, they apply to AI agents exactly as they apply to dashboards. An agent operating on behalf of a sales analyst cannot accidentally retrieve finance data, not because of a prompt-level instruction that can be overridden, but because the semantic layer never generates SQL that crosses the access boundary. Cube embeds security context in JWT tokens that flow through the entire chain from LangChain agent to API to warehouse execution. AtScale explicitly notes that its natural language query product operates without exposing any underlying data to the LLM: only metadata and metric definitions are shared with the model.
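A minimal sketch of compile-time enforcement, under stated assumptions: the policy tables, SecurityContext, and compile_with_policy are hypothetical, and the role and region fields stand in for claims that, in a setup like Cube's, would arrive in an already-verified JWT (this sketch does not decode or verify tokens). The point is ordering: the access check runs before any SQL exists, so there is nothing for a prompt to override.

```python
from dataclasses import dataclass

# Hypothetical policy model: which roles may query which metric.
METRIC_POLICY = {
    "revenue": {"sales", "finance"},
    "gross_margin": {"finance"},
}
METRIC_SQL = {
    "revenue": "SUM(order_total)",
    "gross_margin": "SUM(order_total - cost)",
}

@dataclass(frozen=True)
class SecurityContext:
    role: str
    region: str  # stands in for a claim taken from a verified token upstream

class AccessDenied(PermissionError):
    pass

def compile_with_policy(metric: str, ctx: SecurityContext) -> tuple[str, list[str]]:
    """Return parameterized SQL; the access check happens before SQL is built."""
    if ctx.role not in METRIC_POLICY.get(metric, set()):
        raise AccessDenied(f"role {ctx.role!r} may not query {metric!r}")
    # Row-level filter is always injected from the context, never from the prompt.
    sql = f"SELECT {METRIC_SQL[metric]} AS {metric} FROM orders WHERE region = ?"
    return sql, [ctx.region]

analyst = SecurityContext(role="sales", region="EMEA")
print(compile_with_policy("revenue", analyst))

# The same agent asking for a finance-only metric never gets SQL at all.
try:
    compile_with_policy("gross_margin", analyst)
except AccessDenied as err:
    print(err)
```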

The audit trail is equally important for enterprise deployments. Every query, whether from a dashboard or an AI agent, is logged with lineage from prompt to evidence. This is not a nice-to-have. It is increasingly a compliance requirement.
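As a sketch of what "lineage from prompt to evidence" can mean in practice, the function below builds one JSON audit line linking the caller, the natural-language prompt, the structured semantic request, and the compiled SQL. The function name and field names are hypothetical and do not follow any vendor's log schema.

```python
import hashlib
import json
from datetime import datetime, timezone

def audit_record(user: str, prompt: str, semantic_request: dict, sql: str) -> str:
    """Build one JSON audit line tying a prompt to the request and SQL it produced.

    Field names are illustrative, not a standard or vendor schema.
    """
    record = {
        "ts": datetime.now(timezone.utc).isoformat(),
        "user": user,
        "prompt": prompt,
        "semantic_request": semantic_request,
        "compiled_sql": sql,
        # A stable fingerprint lets auditors group identical compiled queries.
        "sql_sha256": hashlib.sha256(sql.encode("utf-8")).hexdigest(),
    }
    return json.dumps(record, sort_keys=True)

line = audit_record(
    user="agent:sales-assistant",
    prompt="What was EMEA revenue last quarter?",
    semantic_request={"measures": ["orders.total_revenue"], "dimensions": ["customers.region"]},
    sql="SELECT SUM(order_total) AS revenue FROM orders WHERE region = ?",
)
print(line)
```

Logging the semantic request alongside the SQL matters: it records what the agent asked for in business terms, not just what the warehouse executed.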

How the integration actually works in practice

Five distinct integration patterns have emerged for connecting semantic layers to AI systems, each with different trade-offs.

Text-to-semantic-API (Cube, Bonnard) is the most constrained and most accurate pattern. The user’s natural language query goes to an LLM with semantic metadata in context. The LLM produces a structured JSON API request against named semantic objects. The semantic layer compiler translates this deterministically into SQL. The LLM writes no SQL at all. The constraint is coverage: queries outside the semantic model fail.

Semantic-enriched text-to-SQL (AtScale, Snowflake Cortex Analyst) gives the LLM a YAML semantic model specification (metric definitions, dimension descriptions, synonyms, sample values, verified queries) and asks it to produce a simplified query against a virtual logical table. The semantic engine handles physical SQL translation. This allows more query flexibility while dramatically reducing the LLM’s error space compared to a raw schema.
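One way a semantic model spec narrows the error space is synonym resolution: user vocabulary is mapped onto governed names before any query is written. The sketch below assumes a hypothetical, heavily simplified model held in a Python dict (a real Cortex Analyst spec is YAML with more fields), and uses naive single-word matching.

```python
import re

# Hypothetical, simplified semantic model entries: names, descriptions,
# and synonyms only.
SEMANTIC_MODEL = {
    "metrics": [
        {"name": "total_revenue", "description": "Sum of order totals, net of refunds",
         "synonyms": ["revenue", "sales", "turnover"]},
    ],
    "dimensions": [
        {"name": "customer_region", "description": "Sales region of the customer",
         "synonyms": ["region", "territory", "geo"]},
    ],
}

def build_synonym_index(model: dict) -> dict[str, str]:
    """Map every canonical name and synonym (lower-cased) to its canonical name."""
    index = {}
    for kind in ("metrics", "dimensions"):
        for entry in model[kind]:
            for term in [entry["name"], *entry["synonyms"]]:
                index[term.lower()] = entry["name"]
    return index

def resolve_terms(question: str, index: dict[str, str]) -> set[str]:
    """Naive single-word match: which governed objects does the question mention?"""
    words = re.findall(r"[a-z_]+", question.lower())
    return {index[w] for w in words if w in index}

index = build_synonym_index(SEMANTIC_MODEL)
print(resolve_terms("What were sales by territory?", index))
```

Multi-word synonyms such as "net sales" would need phrase matching; in the pattern described above, the LLM itself does this mapping with the spec in its context, and the sketch only shows why synonyms shrink the space of possible misreadings.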

MCP-based agent architecture (dbt, ThoughtSpot, AtScale, Looker) uses Anthropic’s Model Context Protocol to expose semantic layer capabilities as discoverable tools. An AI agent connects to an MCP server and discovers tools like list_metrics, get_dimensions, and query_metrics. It selects tools autonomously based on user intent. This is the most composable pattern and works across any MCP-compatible AI client. dbt’s MCP Server, which became generally available in late 2025, is the clearest production example of this approach.
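The discover-then-call flow can be sketched without the MCP SDK. Below, a plain Python registry stands in for an MCP server's tool list; the tool names mirror those mentioned above, but their signatures, return shapes, and the METRICS data are hypothetical, not dbt's actual MCP server. A real server exposes tools over JSON-RPC rather than in-process.

```python
from typing import Callable

# Hypothetical in-process stand-in for an MCP server's tool list.
TOOLS: dict[str, Callable[..., object]] = {}

def tool(fn):
    """Register a function as a discoverable tool."""
    TOOLS[fn.__name__] = fn
    return fn

# Hypothetical metric -> valid dimensions mapping.
METRICS = {"revenue": ["region", "month"], "churn_rate": ["plan", "month"]}

@tool
def list_metrics() -> list[str]:
    """List governed metric names."""
    return sorted(METRICS)

@tool
def get_dimensions(metric: str) -> list[str]:
    """List dimensions valid for one metric."""
    return METRICS[metric]

@tool
def query_metrics(metric: str, group_by: list[str]) -> dict:
    """Return a structured request; a real server would compile and execute it."""
    invalid = [d for d in group_by if d not in METRICS[metric]]
    if invalid:
        raise ValueError(f"dimensions {invalid} not valid for {metric}")
    return {"metric": metric, "group_by": group_by}

# Discovery: roughly what an agent sees before choosing a tool.
catalog = {name: (fn.__doc__ or "").strip() for name, fn in TOOLS.items()}
print(catalog)
print(TOOLS["query_metrics"]("revenue", ["region"]))
```

The composability comes from the catalog: any client that can read tool names and descriptions can drive the same governed metrics without knowing the schema underneath.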

LangChain/RAG integration (Cube + LangChain) loads semantic layer metadata into a vector store. When a user asks a question, semantic search retrieves relevant metric definitions and schema context, which are injected into the LLM prompt. The LLM generates a Cube API query. Security context flows through the chain via JWT tokens. This pattern works well for conversational agents that need both document retrieval and structured analytics.
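A minimal sketch of the retrieval step, with one loudly stated substitution: plain term-count cosine similarity stands in for a learned embedding model and vector store, and the metric documentation strings are hypothetical. The shape is the same as in the pattern above: score stored metric definitions against the question, then inject the best matches into the prompt.

```python
import math
import re
from collections import Counter

# Hypothetical metric documentation that would be indexed for retrieval.
DOCS = {
    "orders.total_revenue": "total revenue from completed orders net of refunds",
    "customers.churn_rate": "share of customers who cancelled a subscription in the month",
    "marketing.cac": "customer acquisition cost marketing spend divided by new customers",
}

def vectorize(text: str) -> Counter:
    """Bag-of-words term counts (a stand-in for an embedding)."""
    return Counter(re.findall(r"[a-z]+", text.lower()))

def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0

def retrieve(question: str, k: int = 2) -> list[str]:
    """Return the k metric names whose descriptions best match the question."""
    q = vectorize(question)
    ranked = sorted(DOCS, key=lambda name: cosine(q, vectorize(DOCS[name])), reverse=True)
    return ranked[:k]

top = retrieve("How many customers cancelled last month?", k=1)
# The retrieved definitions are what gets injected into the LLM prompt.
context = "\n".join(f"{name}: {DOCS[name]}" for name in top)
print(context)
```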

Platform-native semantics (Snowflake Cortex Analyst + Semantic Views, Databricks Genie + Metric Views, Gemini in Looker) embeds semantic definitions as first-class objects within the data platform. No external middleware is required. The platform’s AI service reads semantic definitions and generates SQL using platform-hosted models. The trade-off is deeper lock-in, but the operational simplicity is real.

Where the market stands and where it is going

The semantic layer market has split into three structural camps that are now converging toward a common protocol layer.

Headless/universal semantic layers (dbt MetricFlow, Cube, AtScale) operate independently of any BI tool or data platform. They support multiple warehouses, multiple consumers (BI tools, notebooks, AI agents, embedded apps), and sit in the critical path for any query. Their value proposition for AI is that a single governed semantic model serves both traditional analytics and AI-powered interfaces from one definition.

Platform-native semantics (Snowflake Semantic Views, Databricks Unity Catalog Metric Views) are embedded inside the platform. Snowflake’s Semantic Views became generally available in mid-2025 and integrate directly with Cortex Analyst. Databricks Metric Views feed into the Genie natural language query interface. Zero additional infrastructure. Tight integration. Platform lock-in.

BI-embedded semantics (LookML, Power BI Tabular, ThoughtSpot TML) continue to evolve. Looker connected its LookML semantic layer to Gemini via an MCP server in early 2026. ThoughtSpot released Spotter Semantics in early 2026, a knowledge graph integrating business logic, security rules, and metric definitions for AI agent consumption. These tools differentiate on deterministic query execution; Spotter, for example, uses patented search tokens rather than probabilistic LLM SQL generation.

The standards question is now live and consequential. The Open Semantic Interchange (OSI) initiative, founded by Snowflake and joined by dbt Labs, Salesforce, ThoughtSpot, Cube, AtScale, Collibra, and others, released its v1.0 spec in January 2026. OSI aims to make semantic definitions portable across tools: define a metric once, export it to any platform. AtScale’s open-source Semantic Modeling Language (SML) and its converters between Snowflake, Databricks, Power BI, and SML represent the most mature translation tooling available today. MCP is rapidly becoming the de facto protocol for connecting semantic layers to AI agents; every major semantic layer vendor has shipped an MCP server.

Real, bidirectional semantic portability remains 12–18 months away. But the direction of standardization is clear, and its driver is AI. The problem of multiple teams defining “revenue” differently, which existed before GenAI, has become a blocking issue now that AI agents answer revenue questions at scale.

- MCP, the AI agent connectivity standard (Anthropic / Linux Foundation, Nov 2024)

- OSI, cross-platform metric portability (Snowflake, Salesforce, dbt; v1.0 Jan 2026)

- SML, an open-source interchange format (AtScale, bidirectional converters; Sep 2024)

The real trade-offs and what critics get right

The semantic layer does not solve enterprise AI’s problems. It constrains them to a manageable surface area. That distinction matters.

The coverage gap is fundamental. The semantic layer is a bounded abstraction: it can only answer questions about what has been defined. Jacob Matson of MotherDuck makes the argument directly: current semantic layers still operate on the premise that a human must define every metric, every dimension, and every relationship before a question can be asked. The long tail of business questions remains unanswered. When a user asks something outside the model, the system fails silently or falls back to raw SQL generation with all its attendant problems. This is not a problem to be solved at launch; it is a permanent maintenance reality.

Maintenance burden is the most consistent adoption barrier. dbt Labs themselves have noted that maintenance overhead increases when the semantic layer is not tightly integrated with existing workflows. Semantic layers require ongoing investment: as the business changes, definitions must change. Neglected semantic models accumulate “semantic dust”: stale metrics, missing dimensions, and deprecated entities that silently produce wrong answers. The organizations that succeed treat the semantic layer as living infrastructure, not a governance project with a finish line.
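Part of that maintenance can be automated. The sketch below, built on a hypothetical schema snapshot and hypothetical definitions, flags one kind of semantic dust: definitions that reference columns no longer present in the warehouse. It cannot catch definitions that still compile but no longer match what the business means; those still need human review.

```python
# Hypothetical snapshot of current warehouse columns, e.g. as read from
# information_schema; the semantic definitions below reference some of them.
WAREHOUSE_COLUMNS = {
    "orders": {"order_id", "customer_id", "order_total", "created_at"},
    "customers": {"customer_id", "region", "plan"},
}

# Hypothetical definitions: semantic object -> physical columns it depends on.
DEFINITIONS = {
    "revenue": ["orders.order_total"],
    "discounted_revenue": ["orders.order_total", "orders.discount_amount"],
    "region": ["customers.region"],
    "segment": ["customers.legacy_segment"],
}

def find_stale(definitions: dict[str, list[str]],
               schema: dict[str, set[str]]) -> dict[str, list[str]]:
    """Report definitions that reference columns no longer in the warehouse."""
    stale = {}
    for name, refs in definitions.items():
        missing = []
        for ref in refs:
            table, column = ref.split(".", 1)
            if column not in schema.get(table, set()):
                missing.append(ref)
        if missing:
            stale[name] = missing
    return stale

print(find_stale(DEFINITIONS, WAREHOUSE_COLUMNS))
# {'discounted_revenue': ['orders.discount_amount'], 'segment': ['customers.legacy_segment']}
```

Run in CI alongside schema migrations, a check like this turns silent breakage into a failed build.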

There are legitimate technical objections to current implementations. Some practitioners argue that modern semantic layers are not truly semantic: they attach labels to technical objects rather than modeling genuine ontological relationships between business concepts. Metrics defined in LookML can silently diverge from metrics defined in Power BI Tabular or Cube for the same business concept. Without OSI-grade interoperability, these “semantic silos” are a real structural risk in multi-tool enterprises.

There is also a valid counterargument from the data modeling direction. MotherDuck’s experiments show frontier LLMs achieving 94–95% accuracy on the BIRD Mini-Dev split using clean, well-named schemas via MCP, with no dedicated semantic layer. The conclusion drawn is that good data modeling is the semantic layer: if your tables are clean, named correctly, and documented, you may not need additional abstraction. The important caveat is that BIRD Mini-Dev databases average seven tables, while enterprise schemas have hundreds to thousands. The argument holds in small, clean environments and breaks down at enterprise scale, precisely where AI ambitions are highest.

Latency and operational complexity are real costs. Standalone semantic layers add network hops and compilation overhead. Cube’s pre-aggregation engine brings hot queries down to single-digit milliseconds, but cold queries still hit the warehouse. Platform-native approaches (Snowflake Semantic Views, Databricks Metric Views) avoid the extra middleware hop at the cost of portability.

The honest framing is this: within the boundary of the semantic model, accuracy and consistency improve dramatically. Outside that boundary, you are back to raw LLM SQL generation. The architectural question is whether the highest-value analytics questions in your enterprise can be modeled, and whether the investment in modeling them is worth the accuracy and governance gains. For most large enterprises, the answer is clearly yes for the metrics that actually drive decisions. For the long tail, the semantic layer is not the answer, and pretending it is will lead to under-engineered fallback paths.

Inside the semantic model:

- High-value, high-frequency metrics (revenue, churn, CAC, utilization)

- Consistent definitions: the same answer every time

- Access-controlled, auditable, version-controlled

- Served deterministically by the semantic layer + LLM

- AI accuracy: 75–93% (AtScale TPC-DS, Google LookML)

Outside the model (unstructured requests):

- Ad hoc, exploratory, or domain-specific queries

- No predefined metrics or dimension paths

- Falls back to raw LLM + schema context

- AI accuracy: 8.7–40% (BIRD-Interact ICLR 2026 at the low end)

- Requires a human analyst or deferred modeling

A lucky architecture, not an invented one

The semantic layer was not designed for AI. It was designed because business users could not write SQL, and the data teams building star schemas and BI tools in the 2012–2020 era recognized that business logic should live in code rather than in analyst spreadsheets. LookML, dbt, MetricFlow, Cube: these tools existed and matured before the term “large language model” entered common usage.

What makes the current moment interesting is that LLMs turn out to have the same problem as business users: they cannot reliably write SQL against ambiguous, undocumented enterprise schemas. The abstraction that helped a marketing analyst in 2016 avoid writing a bad join now helps Claude avoid hallucinating a revenue calculation in 2026. The semantic layer was designed for one problem and maps almost perfectly onto another. That is not a product pitch; it is an architectural observation worth taking seriously.

Gartner elevated semantic modeling from optional to “essential infrastructure” in its 2025 Hype Cycle, explicitly because of AI. The OSI initiative, bringing together Snowflake, Salesforce, dbt Labs, and a dozen others around a shared interchange format, is the clearest signal that the industry has reached the same conclusion. The problem of inconsistent metric definitions, which data teams have been arguing about since the first multi-team data warehouse, has become a blocking problem for enterprise AI at scale.

The organizations that will get reliable value from AI analytics are not the ones that built the most sophisticated RAG pipelines or fine-tuned the largest models. They are the ones that invested in making their data semantically consistent: that defined “revenue” once, in code, in a place every consumer, including AI agents, can read. That investment was worth making before AI. It is worth making now for different and more urgent reasons. The architecture was always there. The AI era has simply made ignoring it too expensive.