How to Build a Production AI Platform by Extending Your Data Platform

Last time, I talked about the right approach to taking AI projects from PoC to production. Now let's talk about the AI Platform!

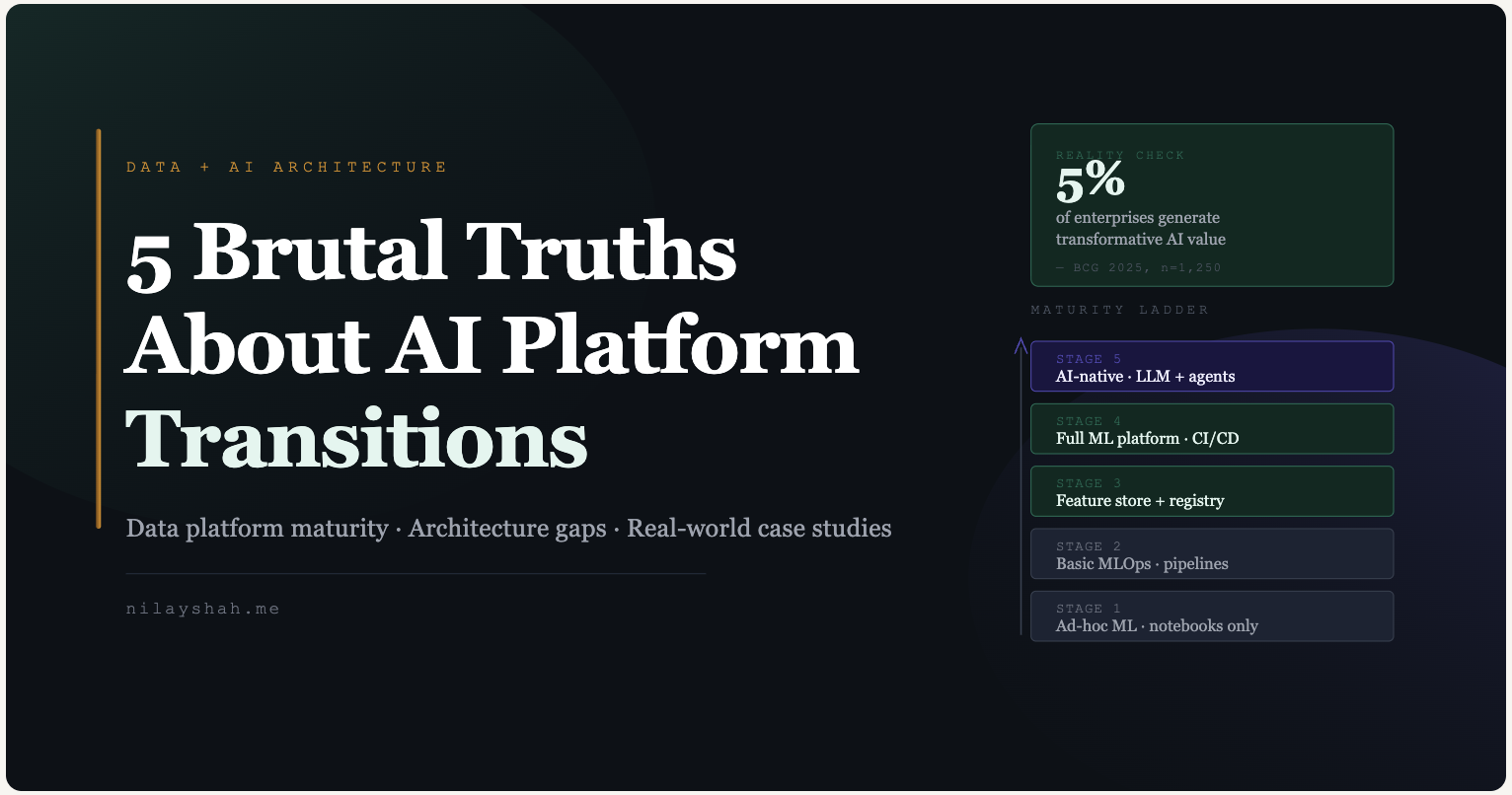

Only 5% of enterprises generate transformative value from AI despite near-universal adoption and $1.5 trillion in global spending. The gap between AI investment and AI returns has widened into a chasm: 78% of organizations now use AI in at least one business function, yet 88% of AI pilots never reach production, and 42% of companies abandoned most AI initiatives in 2025, up from 17% in 2024. The root cause is not model quality or compute access. It is the invisible infrastructure beneath the models: ungoverned data, missing semantic layers, and organizations that treat AI as a technology deployment rather than an AI Platform transformation. This post maps the concrete architectural, organizational, and strategic shifts required to move from a functioning data platform to a production AI Platform, grounded in what Netflix, Uber, Stripe, Pinterest, and Meta actually built.

The $1.5 trillion paradox: everyone invests, almost nobody scales

The enterprise AI investment landscape in 2025 paints a picture of extraordinary spending against underwhelming returns. Gartner pegged worldwide AI spending at $1.5 trillion in 2025, forecasting $2.52 trillion for 2026, a 44% year-over-year increase. Enterprise GenAI spend alone hit $37 billion in 2025, a 3.2x jump from $11.5 billion in 2024, according to Menlo Ventures. The U.S. accounts for 76% of global AI infrastructure spending, with private AI investment reaching $109.1 billion, nearly 12x China’s $9.3 billion, as reported in the Stanford AI Index 2025.

Yet the returns tell a different story. BCG’s 2025 study of 1,250 firms found that only 5% are “future-built” companies generating transformative AI value. Sixty percent report minimal or no material value despite substantial investment. MIT’s NANDA report put the GenAI pilot failure rate at 95%. Only 14% of CFOs surveyed by RGP said they have seen clear, measurable impact. Forrester found that fewer than one-third of AI decision-makers can tie AI value to P&L changes.

Where is the money actually going? Roughly half of GenAI spending ($19 billion) flows to the application layer, covering copilots, coding tools, and vertical AI, while the other half ($18 billion) goes to infrastructure. Coding tools alone represent $4 billion, making software development the breakout AI use case. Healthcare leads vertical AI spend at $1.5 billion, more than the next four verticals combined. The buy-versus-build ratio has shifted dramatically: 76% of AI solutions are now purchased externally, up from 53% in 2024, as enterprises retreat from building custom systems they cannot maintain.

BCG’s 10-20-70 rule captures the misallocation precisely: top-performing organizations dedicate 10% of effort to algorithms, 20% to data and technology, and 70% to people, processes, and cultural transformation. Most enterprises invert this ratio, pouring resources into models while starving the organizational and data foundations that determine whether those models ever reach production.

Where most data platforms actually stand today

Understanding where an organization sits on the data platform maturity curve is the first prerequisite for any AI Platform transformation. Multiple frameworks converge on a consistent finding: most enterprises cluster at maturity levels 2 to 3 out of 5 (far short of the foundation an AI platform requires), with basic governance in place but systematic quality monitoring, semantic layers, and AI-readiness still absent.

Gartner’s Enterprise Information Management maturity model distributes organizations across five levels: Aware, Reactive, Proactive, Managed, and Optimized. Their benchmark data shows 34% of organizations at Level 3 (Proactive) and 31% at Level 4 (Managed), with only 9% reaching the Optimized level and fewer than 5% achieving the highest maturity. The practical markers of each stage are instructive:

Level 1 (Aware): Organizations rely on spreadsheets for data inventory, have no formal data owners, and fix quality issues reactively after they surface in downstream reports.

Level 2 (Reactive): A data catalog exists in name, but coverage is partial and manually maintained. Policies are documented but not enforced. Data quality is measured only for critical pipelines after failures.

Level 3 (Proactive): Enterprise-wide governance policies are documented and applied to high-value datasets. A data catalog covers 40 to 60% of assets. Basic automated quality rules exist but monitoring is not continuous.

Level 4 (Managed): Automated quality controls operate at scale with metrics-driven governance. Data lineage is tracked end-to-end. Most business-critical datasets have owners, SLAs, and quality thresholds.

Level 5 (Optimized): Data is treated as a strategic asset with federated governance, real-time quality monitoring, and self-service capabilities covering over 90% of data assets. AI-readiness checks are embedded in data product development.
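To make the Level 3 and Level 4 markers concrete (named owners, freshness SLAs, and automated quality thresholds), here is a minimal Python sketch of what a metrics-driven check might look like. `DatasetSpec`, `QualityRule`, and the `orders` dataset are hypothetical; in practice these rules usually live in a data testing or observability tool rather than hand-written code.

```python
from __future__ import annotations

from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import Callable

Rows = list[dict]


@dataclass
class QualityRule:
    name: str
    check: Callable[[Rows], float]  # returns an observed score between 0 and 1
    threshold: float                # minimum acceptable score


@dataclass
class DatasetSpec:
    name: str
    owner: str                      # the accountable owner that Level 4 expects
    freshness_sla: timedelta
    rules: list[QualityRule]


def non_null_rate(column: str) -> Callable[[Rows], float]:
    """Share of rows where `column` is present and not None."""
    def _check(rows: Rows) -> float:
        if not rows:
            return 0.0
        return sum(r.get(column) is not None for r in rows) / len(rows)
    return _check


def evaluate(spec: DatasetSpec, rows: Rows, last_updated: datetime, now: datetime) -> list[str]:
    """Run freshness and quality checks; return failures tagged with the owning team."""
    failures = []
    if now - last_updated > spec.freshness_sla:
        failures.append(f"{spec.name}: freshness SLA breached (owner: {spec.owner})")
    for rule in spec.rules:
        observed = rule.check(rows)
        if observed < rule.threshold:
            failures.append(
                f"{spec.name}.{rule.name}: {observed:.2f} < {rule.threshold:.2f} (owner: {spec.owner})"
            )
    return failures


orders = DatasetSpec(
    name="orders",
    owner="payments-team",
    freshness_sla=timedelta(hours=6),
    rules=[QualityRule("customer_id_not_null", non_null_rate("customer_id"), 0.99)],
)
now = datetime(2025, 1, 1, 12, tzinfo=timezone.utc)
rows = [{"customer_id": 1}, {"customer_id": None}, {"customer_id": 3}, {"customer_id": 4}]
failures = evaluate(orders, rows, last_updated=now - timedelta(hours=8), now=now)
```

Here both checks fail: the data is eight hours old against a six-hour SLA, and only 75% of rows carry a `customer_id`. Attaching the owner to every failure is what turns a passive measurement into a control someone is accountable for.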

Databricks frames data platform maturity through a three-phase lakehouse journey: Establish, Scale, and Autonomy, anchored by the medallion architecture (Bronze to Silver to Gold) and governed through Unity Catalog. Snowflake offers a parallel three-phase model: Migrate, Modernize, Monetize, progressing from basic warehousing to data products and clean rooms. Monte Carlo’s data quality maturity curve maps the operational dimension that most frameworks underweight, moving from Crawl (manual testing) to Walk (automated observability) to Run (cross-functional coordination with data contracts). dbt Labs’ Analytics Development Lifecycle whitepaper reframes maturity around software engineering principles: a mature analytics workflow requires elastic scale, automatic validation, auditability, and the ability to scale from experimental to mission-critical without re-architecting.
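The medallion pattern is easiest to see as a sequence of responsibilities. The sketch below uses plain Python lists in place of Delta tables and Spark jobs, which is an assumption made for readability: Bronze keeps raw records as delivered, Silver cleans, types, and de-duplicates them, and Gold holds a business-level aggregate. The record layout (`order_id`, `amount`, `ts`) is hypothetical.

```python
from __future__ import annotations

from collections import defaultdict
from datetime import date


def to_silver(bronze: list[dict]) -> list[dict]:
    """Bronze -> Silver: drop malformed rows, cast types, de-duplicate on order_id."""
    seen: set[str] = set()
    silver = []
    for rec in bronze:
        try:
            order_id = str(rec["order_id"])
            amount = float(rec["amount"])
            day = date.fromisoformat(rec["ts"][:10])
        except (KeyError, TypeError, ValueError):
            continue  # a production pipeline would quarantine the row instead of dropping it
        if order_id in seen:
            continue
        seen.add(order_id)
        silver.append({"order_id": order_id, "amount": amount, "day": day})
    return silver


def to_gold(silver: list[dict]) -> dict[date, float]:
    """Silver -> Gold: a business-level aggregate (daily revenue) ready for BI or features."""
    totals: dict[date, float] = defaultdict(float)
    for rec in silver:
        totals[rec["day"]] += rec["amount"]
    return dict(sorted(totals.items()))


bronze = [
    {"order_id": 1, "amount": "10.50", "ts": "2025-03-01T09:00:00Z"},
    {"order_id": 1, "amount": "10.50", "ts": "2025-03-01T09:00:00Z"},  # duplicate delivery
    {"order_id": 2, "amount": "n/a", "ts": "2025-03-01T10:00:00Z"},    # malformed amount
    {"order_id": 3, "amount": "4.50", "ts": "2025-03-02T08:00:00Z"},
]
gold = to_gold(to_silver(bronze))
```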

The most telling statistic comes from dbt Labs’ 2024 State of Analytics Engineering survey: 57% of practitioners cite poor data quality as their predominant challenge, and most spend the majority of their time organizing datasets rather than analyzing them. This is the foundation on which enterprises are attempting to build AI systems, and it goes a long way toward explaining the 88% pilot failure rate.

The architectural leap from data platform to AI Platform

The transition from “we have a data platform” to “we have an AI Platform” is not an upgrade. It is an architectural phase change. A traditional data platform moves data at rest toward analytics: source systems feed ETL pipelines, which populate a centralized warehouse, which powers BI dashboards. An AI Platform must support data in motion for intelligence: real-time feature computation, embedding generation, vector search, model serving at millisecond latency, and continuous evaluation of probabilistic outputs. Gartner projects that by 2026, 80% of enterprises will have adopted a modern data platform architecture driven by AI needs, and BI will represent less than 50% of data platform usage.
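One way to picture the "data in motion" difference is a feature that must be current at request time rather than recomputed nightly. Below is a minimal sketch, assuming events for each entity arrive in timestamp order; a production system would keep this state in a stream processor and handle late or out-of-order events. The class and feature names are hypothetical.

```python
from collections import defaultdict, deque


class SlidingWindowCount:
    """Streaming feature: events per entity in the trailing `window_s` seconds.

    Assumes events for an entity arrive in timestamp order (no late-data handling).
    """

    def __init__(self, window_s: float) -> None:
        self.window_s = window_s
        self.events: dict[str, deque] = defaultdict(deque)

    def update(self, entity: str, ts: float) -> int:
        """Record an event and return the fresh feature value at time `ts`."""
        self.events[entity].append(ts)
        return self.value(entity, ts)

    def value(self, entity: str, now: float) -> int:
        """Evict events that fell out of the window, then return the count."""
        q = self.events[entity]
        while q and q[0] <= now - self.window_s:
            q.popleft()
        return len(q)


txn_count_10m = SlidingWindowCount(window_s=600)
values = [txn_count_10m.update("card_1", t) for t in (0, 300, 700)]
```

A nightly batch job would report the same count for the whole day; the streaming version changes with every event, which is what fraud and ETA models consume at inference time.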

The new architectural components required fall into several distinct layers.

Feature stores (Feast, Tecton, Databricks Feature Store) solve the training-serving skew problem by providing a single data access layer for both offline model training and online inference. Uber’s feature store Palette hosts over 20,000 features. Stripe built Shepherd on open-source Chronon to power fraud detection with 200-plus features per model.
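The mechanism behind "a single data access layer" can be sketched in a few lines. This hypothetical `MiniFeatureStore` (not the Feast, Tecton, or Databricks API) stores every feature value with a timestamp. Training rows are joined point-in-time, as of each label's timestamp, so the model never sees future data, while online serving reads the latest value from the same history. Because both paths read the same records, offline and online values cannot silently diverge.

```python
from __future__ import annotations

from bisect import bisect_right
from collections import defaultdict


class MiniFeatureStore:
    """Hypothetical in-memory feature store with one write path and two read paths."""

    def __init__(self) -> None:
        # (entity, feature) -> list of (timestamp, value), kept sorted by timestamp
        self._history: dict[tuple[str, str], list[tuple[int, float]]] = defaultdict(list)

    def write(self, entity: str, feature: str, ts: int, value: float) -> None:
        rows = self._history[(entity, feature)]
        rows.append((ts, value))
        rows.sort(key=lambda r: r[0])

    def get_as_of(self, entity: str, feature: str, ts: int) -> float | None:
        """Offline read: latest value with timestamp <= ts, so no future leakage."""
        rows = self._history.get((entity, feature), [])
        idx = bisect_right([r[0] for r in rows], ts)
        return rows[idx - 1][1] if idx else None

    def get_online(self, entity: str, feature: str) -> float | None:
        """Online read: the most recent value, used at inference time."""
        rows = self._history.get((entity, feature), [])
        return rows[-1][1] if rows else None

    def training_rows(self, labels: list[tuple[str, int, int]], features: list[str]) -> list[dict]:
        """Join labels (entity, ts, label) to features as they were at each label's timestamp."""
        out = []
        for entity, ts, label in labels:
            row = {f: self.get_as_of(entity, f, ts) for f in features}
            row["label"] = label
            out.append(row)
        return out


store = MiniFeatureStore()
store.write("user_1", "txn_count_7d", ts=100, value=3)
store.write("user_1", "txn_count_7d", ts=200, value=9)
train = store.training_rows([("user_1", 150, 0), ("user_1", 250, 1)], ["txn_count_7d"])
online = store.get_online("user_1", "txn_count_7d")
```

The label at `ts=150` is joined to the value 3, not 9, even though 9 is already stored; a naive "latest value" join would leak the future into training and produce exactly the skew a feature store exists to prevent.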

Vector stores (Pinecone, Weaviate, Milvus, pgvector) enable semantic search for RAG architectures, though the market is converging toward vector search as a capability within existing platforms rather than as standalone databases.
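At its core, vector search ranks stored embeddings by similarity to a query embedding. The sketch below does exact, brute-force cosine ranking over a Python dict; vector databases and extensions such as pgvector add approximate indexes to do this at scale. The three-dimensional vectors and document IDs are made up for illustration; real embeddings have hundreds or thousands of dimensions.

```python
from __future__ import annotations

import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def search(index: dict[str, list[float]], query: list[float], k: int = 3) -> list[tuple[str, float]]:
    """Exact nearest-neighbor search: score every stored vector, keep the top k."""
    scored = [(doc_id, cosine_similarity(query, vec)) for doc_id, vec in index.items()]
    scored.sort(key=lambda item: item[1], reverse=True)
    return scored[:k]


index = {
    "refund-policy": [0.9, 0.1, 0.0],
    "shipping-faq": [0.1, 0.9, 0.0],
    "returns-howto": [0.8, 0.3, 0.1],
}
results = search(index, query=[1.0, 0.2, 0.0], k=2)
```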

Model registries (MLflow, Weights and Biases) manage the lifecycle from experiment to staging to production with full versioning and lineage. Without a model registry, teams lose track of which version is serving in production and why performance changed.
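To illustrate the two questions a registry must answer (which version is serving, and what was it trained on), here is a hypothetical in-memory registry. It is not the MLflow or Weights and Biases API; it only shows versioning, stage transitions, and lineage metadata, under the assumption that exactly one version serves production at a time.

```python
from __future__ import annotations

from dataclasses import dataclass, field

STAGES = ("experiment", "staging", "production", "archived")


@dataclass
class ModelVersion:
    version: int
    metrics: dict[str, float]
    lineage: dict[str, str]          # e.g. training-data snapshot and code commit
    stage: str = "experiment"


@dataclass
class ModelRegistry:
    """Hypothetical registry for illustration only."""
    models: dict[str, list[ModelVersion]] = field(default_factory=dict)

    def register(self, name: str, metrics: dict[str, float], lineage: dict[str, str]) -> ModelVersion:
        history = self.models.setdefault(name, [])
        mv = ModelVersion(version=len(history) + 1, metrics=metrics, lineage=lineage)
        history.append(mv)
        return mv

    def transition(self, name: str, version: int, stage: str) -> None:
        if stage not in STAGES:
            raise ValueError(f"unknown stage {stage!r}")
        if stage == "production":
            # Exactly one version serves production; archive the incumbent.
            for mv in self.models[name]:
                if mv.stage == "production":
                    mv.stage = "archived"
        self.models[name][version - 1].stage = stage

    def serving(self, name: str) -> ModelVersion | None:
        """Answer: which version is in production, and what was it trained on?"""
        return next((mv for mv in self.models.get(name, []) if mv.stage == "production"), None)


registry = ModelRegistry()
registry.register("fraud", {"auc": 0.91}, {"data": "snapshot-2025-03-01", "commit": "a1b2c3d"})
registry.register("fraud", {"auc": 0.93}, {"data": "snapshot-2025-04-01", "commit": "e4f5a6b"})
registry.transition("fraud", 1, "production")
registry.transition("fraud", 2, "staging")
registry.transition("fraud", 2, "production")
live = registry.serving("fraud")
```

When performance changes, `live.lineage` points at the data snapshot and commit behind the serving model, and the archived version remains available for rollback.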

LLM gateways provide unified API interfaces across multiple LLM providers, with intelligent routing by task complexity, token-aware rate limiting, automatic failover, and cost attribution by team. Practitioners consistently describe this component as essential once LLM spend exceeds roughly $3,000 per month or spans three or more services.
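Here is a minimal sketch of the gateway responsibilities listed above, assuming hypothetical provider clients passed in as plain functions: routing by a caller-supplied complexity label, a token bucket that limits on estimated tokens rather than request count, ordered failover, and per-team cost attribution. The 4-characters-per-token estimate and the prices are placeholder assumptions; a real gateway would use the provider's tokenizer and bill completion tokens too.

```python
from __future__ import annotations

import time
from collections import defaultdict
from dataclasses import dataclass
from typing import Callable


@dataclass
class Provider:
    name: str
    usd_per_1k_tokens: float
    call: Callable[[str], str]  # hypothetical client function; raises on outage


class TokenBucket:
    """Token-aware rate limit: the budget is LLM tokens, not request count."""

    def __init__(self, capacity: int, refill_per_sec: float, clock: Callable[[], float] = time.monotonic):
        self.capacity = capacity
        self.tokens = float(capacity)
        self.refill_per_sec = refill_per_sec
        self.clock = clock
        self.last = clock()

    def try_consume(self, n: int) -> bool:
        now = self.clock()
        self.tokens = min(self.capacity, self.tokens + (now - self.last) * self.refill_per_sec)
        self.last = now
        if n > self.tokens:
            return False
        self.tokens -= n
        return True


class Gateway:
    def __init__(self, routes: dict[str, list[Provider]], bucket: TokenBucket):
        self.routes = routes  # task complexity -> providers in failover order
        self.bucket = bucket
        self.spend_by_team: dict[str, float] = defaultdict(float)

    def complete(self, team: str, prompt: str, complexity: str = "simple") -> str:
        est_tokens = max(1, len(prompt) // 4)  # rough heuristic: about 4 characters per token
        if not self.bucket.try_consume(est_tokens):
            raise RuntimeError("rate limited")
        last_error: Exception | None = None
        for provider in self.routes[complexity]:
            try:
                answer = provider.call(prompt)
            except Exception as exc:  # provider outage: fail over to the next one
                last_error = exc
                continue
            # Attribute cost to the calling team (prompt tokens only, for simplicity).
            self.spend_by_team[team] += est_tokens / 1000 * provider.usd_per_1k_tokens
            return answer
        raise RuntimeError("all providers failed") from last_error


def primary_down(prompt: str) -> str:
    raise ConnectionError("primary provider unavailable")


def backup(prompt: str) -> str:
    return "ok: " + prompt[:10]


gateway = Gateway(
    routes={
        "simple": [Provider("small-primary", 0.5, primary_down), Provider("small-backup", 1.0, backup)],
        "complex": [Provider("large", 5.0, backup)],
    },
    bucket=TokenBucket(capacity=1000, refill_per_sec=10, clock=lambda: 0.0),
)
answer = gateway.complete("search-team", "x" * 400)
```

The call fails over from the unavailable primary to the backup, and the 100 estimated tokens are charged to `search-team` at the backup's price, which is the per-team attribution that makes LLM spend visible.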

RAG infrastructure has emerged as perhaps the most critical and most misunderstood component. A production RAG system requires a document ingestion pipeline, an embedding service, a vector store, a retrieval layer with metadata filtering, an LLM inference layer, and guardrails for content safety and PII detection. Each layer introduces a failure mode that teams routinely attribute to the model instead of the pipeline.
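The sketch below strings together toy versions of those layers so each failure point has a name. Everything here is an assumption for illustration: the "embedding service" is a hashed bag of words, the vector store is a Python list, the guardrail only redacts e-mail addresses, and the LLM call itself is omitted. The document IDs and metadata keys are hypothetical.

```python
from __future__ import annotations

import hashlib
import math
import re
from dataclasses import dataclass

DIM = 64
EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.]+")


def embed(text: str) -> list[float]:
    """Toy embedding service: hashed bag of words, L2-normalized (stand-in for a real model)."""
    vec = [0.0] * DIM
    for token in re.findall(r"[a-z0-9]+", text.lower()):
        vec[int(hashlib.sha256(token.encode()).hexdigest(), 16) % DIM] += 1.0
    norm = math.sqrt(sum(v * v for v in vec)) or 1.0
    return [v / norm for v in vec]


@dataclass
class Chunk:
    doc_id: str
    text: str
    metadata: dict[str, str]
    vector: list[float]


def ingest(doc_id: str, text: str, metadata: dict[str, str], max_words: int = 40) -> list[Chunk]:
    """Ingestion pipeline: split into fixed-size word windows and embed each chunk."""
    words = text.split()
    chunks = []
    for i in range(0, len(words), max_words):
        piece = " ".join(words[i:i + max_words])
        chunks.append(Chunk(doc_id, piece, metadata, embed(piece)))
    return chunks


def retrieve(store: list[Chunk], query: str, filters: dict[str, str], k: int = 3) -> list[Chunk]:
    """Retrieval layer: apply metadata filters first, then rank by cosine similarity."""
    q = embed(query)
    candidates = [c for c in store if all(c.metadata.get(key) == val for key, val in filters.items())]
    candidates.sort(key=lambda c: sum(a * b for a, b in zip(q, c.vector)), reverse=True)
    return candidates[:k]


def build_prompt(query: str, chunks: list[Chunk]) -> str:
    """Guardrail plus prompt assembly: redact e-mail addresses before context reaches the LLM."""
    context = "\n".join(f"[{c.doc_id}] {EMAIL.sub('[REDACTED_EMAIL]', c.text)}" for c in chunks)
    return f"Answer only from the context.\n\nContext:\n{context}\n\nQuestion: {query}"


store = ingest("refunds-2025", "Refunds are issued within 14 days. Contact billing@example.com for help.",
               {"region": "EU", "status": "current"})
store += ingest("refunds-2019", "Refunds are issued within 30 days.", {"region": "EU", "status": "archived"})
question = "How many days until I get a refund?"
hits = retrieve(store, question, {"region": "EU", "status": "current"})
prompt = build_prompt(question, hits)
```

Without the `status` filter, the archived 2019 policy would compete for the context window, and a "wrong" answer about 30 days would look like a model hallucination when it is really a retrieval-governance gap.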

LLMOps pipelines differ fundamentally from traditional MLOps: cost concentrates in inference rather than training, evaluation is semantic rather than metric-based, and drift manifests as behavioral change rather than statistical distribution shift. Prompt management, as Chip Huyen warns, will become like maintaining 700-line SQL queries that nobody dares touch.
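Because LLM evaluation is semantic, regression testing looks like a golden set of questions with expected facts rather than a single accuracy number. Below is a minimal sketch, assuming a simple substring check as the scorer (teams often substitute an LLM judge or embedding similarity) and two hypothetical prompt versions stubbed as dictionaries. The gate compares pass rates so that a behavioral change is caught before release.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from typing import Callable


@dataclass
class GoldenCase:
    question: str
    must_mention: list[str]                                    # facts a correct answer must contain
    must_not_mention: list[str] = field(default_factory=list)  # known hallucinations or policy violations


def passes(answer: str, case: GoldenCase) -> bool:
    """Stand-in semantic check based on required and forbidden phrases."""
    text = answer.lower()
    return all(f.lower() in text for f in case.must_mention) and not any(
        b.lower() in text for b in case.must_not_mention
    )


def pass_rate(generate: Callable[[str], str], cases: list[GoldenCase]) -> float:
    return sum(passes(generate(c.question), c) for c in cases) / len(cases)


def regression_gate(baseline, candidate, cases, max_drop: float = 0.02) -> tuple[bool, float, float]:
    """Block a prompt or model change whose behavior regresses on the golden set."""
    base, cand = pass_rate(baseline, cases), pass_rate(candidate, cases)
    return cand >= base - max_drop, base, cand


cases = [
    GoldenCase("What is the refund window?", ["14 days"], ["30 days"]),
    GoldenCase("Who handles billing disputes?", ["billing team"]),
]
# Stand-ins for two prompt versions calling the same model.
prompt_v1 = {cases[0].question: "Refunds take 14 days.", cases[1].question: "The billing team."}.get
prompt_v2 = {cases[0].question: "Refunds take 30 days.", cases[1].question: "The billing team."}.get
ok, base, cand = regression_gate(prompt_v1, prompt_v2, cases)
```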

The organizational shift is equally profound. Netflix’s ML Platform team provides what they describe as an entire ecosystem of tools around Metaflow, empowering data scientists to be self-sufficient from prototyping to production. Uber’s Michelangelo operates as ML-as-a-service with a centralized platform team plus embedded ML engineers per product team. The team structure evolves from data engineers managing pipelines to a tripartite model: ML platform engineers building infrastructure, ML engineers deploying models, and Responsible AI teams handling governance, ethics, and compliance. A new function, FinOps for AI, is also emerging to manage the economics of token-based LLM costs and GPU compute.

The five stages of AI Platform maturity

Google’s foundational MLOps maturity model (Levels 0 to 2) remains widely referenced, but its three levels compress too much ground for enterprises navigating incremental progress. Synthesizing Google’s model with Microsoft’s five-level framework, MIT CISR’s four-stage enterprise AI model, and Gartner’s AI maturity levels produces a more actionable five-stage AI Platform maturity progression.

Stage 1: Ad-hoc ML. Every step is manual. Data scientists work in Jupyter notebooks, models are handed off to engineering teams for custom integration, and there is no monitoring of model performance in production. Models fail silently when data changes. This is where most enterprises begin and where many remain.

Stage 2: Basic MLOps. Teams deploy training pipelines rather than just trained models. Experiment tracking begins via MLflow or Weights and Biases, and continuous training triggers when new data arrives. The leap from Stage 1 to Stage 2 is enormous, and practitioners routinely underestimate its difficulty.

Stage 3: Feature platform and model registry. Centralized feature stores, model registries with lineage tracking, automated retraining schedules, and A/B testing infrastructure are operational. Dedicated AI platform teams form. The organization can now run multiple models in production simultaneously without chaos.

Stage 4: Full ML platform. This corresponds to Google’s Level 2. CI/CD is applied to ML pipeline code itself. Real-time inference operates at sub-millisecond latency. Sophisticated monitoring and alerting cover model performance, data drift, and system health. Self-service capabilities allow model owners to be fully autonomous from feature engineering through deployment.

Stage 5: AI-native platform (AI Platform). Traditional ML and generative AI are integrated under a unified platform. LLM gateways, RAG infrastructure, agent orchestration frameworks, continuous LLM evaluation, and self-healing systems are operational. MIT CISR estimates only 7% of enterprises reach this final stage.
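The data-drift monitoring introduced at Stage 4 is what closes the Stage 1 gap where models fail silently when data changes. One widely used drift statistic is the population stability index (PSI), sketched here under the assumption that bin edges are fixed from the training distribution and empty bins are floored at a small epsilon. The sample data is made up.

```python
from __future__ import annotations

import math
from bisect import bisect_right


def population_stability_index(expected: list[float], actual: list[float],
                               edges: list[float], eps: float = 1e-6) -> float:
    """PSI = sum over bins of (a_i - e_i) * ln(a_i / e_i).

    `expected` is the training-time sample, `actual` the recent production sample.
    Empty bins are floored at `eps` so the logarithm stays finite.
    """
    def proportions(values: list[float]) -> list[float]:
        counts = [0] * (len(edges) + 1)
        for v in values:
            counts[bisect_right(edges, v)] += 1
        return [max(c / len(values), eps) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


edges = [1.5, 2.5, 3.5]
training_sample = [1, 2, 3, 4] * 25
stable = population_stability_index(training_sample, [1, 2, 3, 4] * 10, edges)
shifted = population_stability_index(training_sample, [4] * 100, edges)
```

A commonly quoted rule of thumb treats PSI below 0.1 as stable and above 0.25 as significant drift, though thresholds are best tuned per feature and paired with an alert that routes to the model owner.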

The financial stakes of progression are quantified by BCG: future-built companies at the top of the maturity curve generate 1.7x revenue growth, 1.6x higher EBIT margins, and 3.6x greater three-year total shareholder return compared to companies still at the bottom.

What Netflix, Uber, Stripe, and Pinterest actually built

The most instructive case studies come not from vendor marketing but from engineering blogs and conference talks documenting multi-year platform journeys. Four companies offer particularly detailed and metrics-rich accounts of this AI platform transition.

Uber’s Michelangelo is the most thoroughly documented ML platform evolution, spanning three distinct phases across eight years. Phase 1 (2016 to 2019) focused on predictive ML for tabular data, launching XGBoost models for ETA, pricing, and risk, along with the Palette feature store now hosting 20,000-plus features. Phase 2 (2019 to 2023) made deep learning a first-class citizen through Canvas, their model-iteration-as-code abstraction, achieving a full unified developer experience where 60% of tier-1 models migrated to deep learning. Phase 3 (2023 to present) extended the platform for generative AI, adding LLM-powered customer service tooling and a GenAI API gateway. Today Michelangelo runs approximately 400 active ML projects, 20,000-plus training jobs per month, and 5,000-plus models in production, serving 10 million real-time predictions per second at peak. The platform’s founding engineers went on to create Tecton, which now powers feature platforms at Atlassian and Tide.

Netflix built its ML infrastructure around Metaflow, an open-source framework that reduced the median time from project idea to production deployment from four months to approximately one week. The design philosophy was explicitly human-centric: tools optimized for data scientist productivity rather than infrastructure team preferences. Netflix now runs hundreds of Metaflow projects across its AI platform, covering recommendations, content demand modeling, fraud detection, artwork personalization, and creative content creation, all containerized on Titus, their container management platform on AWS.

Pinterest’s MLEnv standardization offers the most dramatic before-and-after metrics. In 2021, the company had over 10 different ML frameworks with less than 5% of jobs running on any standard platform. By Q1 2023, 95% of ML jobs ran on MLEnv, training job volume increased 300%, and ML Platform Net Promoter Score jumped 43 points. The standardization on PyTorch, plus their Linchpin DSL that eliminated training-serving mismatches, proved that the AI platform layer, not the model layer, was the binding constraint on ML productivity. Pinterest now processes hundreds of millions of ML inferences per second across ads, recommendations, search, and trust and safety.

Stripe’s Radar demonstrates how a feature platform directly translates to business value. By adapting Airbnb’s open-source Chronon into their internal Shepherd platform, Stripe built a SEPA fraud model with 200-plus features that blocks tens of millions of dollars in additional fraud per year. Their Payments Foundation Model, trained on tens of billions of transactions, increased card testing detection from 59% to 97%. The ML flywheel pattern, where detection generates labels, labels produce features, features train new models, and new models deploy, is now a core competitive advantage across $1.4 trillion in annual payment volume.

A common pattern emerges across all four companies. Feature stores are universal infrastructure. Kubernetes plus Ray is converging as the standard ML compute layer. PyTorch dominates as the deep learning framework. All companies moved toward centralized ML platforms while permitting team-specific customization. And the typical end-to-end transformation takes four to six years across three generations of platform architecture.

The insight most practitioners miss

The most consequential finding for mid-journey enterprises is this: the semantic layer, not the model layer, is the missing link between data platform and AI Platform. Organizations that skip semantic layer work and jump straight to AI spend three times longer debugging outputs than building them. Without agreed-upon metric definitions, business context, and governed relationships between data entities, every AI application must reinvent its understanding of what the data means. Databricks acknowledged this directly when Unity Catalog expanded beyond governance into semantic foundations: the lakehouse alone has become table stakes.
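To make "agreed-upon metric definitions" concrete, here is a hypothetical governed metric compiled to SQL. The point is that a dashboard, a notebook, and an LLM text-to-SQL agent would all call the same `compile_metric` rather than each re-deriving what "net revenue" means. The table, columns, and metric are invented for illustration; dedicated semantic-layer tools define metrics in their own formats.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Metric:
    name: str
    description: str          # business meaning, also usable as context for an LLM
    table: str
    expression: str
    filters: tuple[str, ...] = ()


# Hypothetical governed definitions: the single source of truth every consumer compiles from.
METRICS = {
    "net_revenue": Metric(
        name="net_revenue",
        description="Order revenue minus refunds, excluding internal test accounts",
        table="analytics.orders",
        expression="SUM(amount) - SUM(refund_amount)",
        filters=("is_test_account = FALSE",),
    ),
}


def compile_metric(name: str, group_by: tuple[str, ...] = ()) -> str:
    """Render SQL from the governed definition instead of letting each app re-derive it."""
    m = METRICS[name]
    select = ", ".join([*group_by, f"{m.expression} AS {m.name}"])
    sql = f"SELECT {select} FROM {m.table}"
    if m.filters:
        sql += " WHERE " + " AND ".join(m.filters)
    if group_by:
        sql += " GROUP BY " + ", ".join(group_by)
    return sql


sql = compile_metric("net_revenue", group_by=("region",))
```

The test-account exclusion lives in one place; an AI application that skipped the semantic layer would have to rediscover that rule, and would usually get it wrong silently.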

This connects to a deeper pattern around data contracts. As Chad Sanderson, the architect of the modern data contracts movement, articulates: data quality issues never start with data, they start with code owned by a different team. Data contracts function as API-like agreements between software engineers who own services and data consumers, shifting quality enforcement to the point of data production rather than the point of consumption. The Publicis Sapient 2026 benchmark captures it starkly: “AI won’t fail for lack of models. It will fail for lack of data discipline.”
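Here is a minimal sketch of producer-side enforcement, assuming a hypothetical `orders.created` event. The contract names an owning team, a version, and typed fields, and `publish` rejects a violating record before it reaches any consumer. Real deployments often express contracts as JSON Schema, Avro, or Protobuf in a schema registry and check compatibility in CI; this plain-Python version only shows the idea.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class FieldSpec:
    name: str
    type_: type
    required: bool = True


@dataclass(frozen=True)
class DataContract:
    dataset: str
    version: int
    owner: str                      # the producing team accountable for the schema
    fields: tuple[FieldSpec, ...]

    def violations(self, record: dict) -> list[str]:
        problems = []
        for spec in self.fields:
            value = record.get(spec.name)
            if value is None:
                if spec.required:
                    problems.append(f"missing required field '{spec.name}'")
            elif not isinstance(value, spec.type_):
                problems.append(f"'{spec.name}' expected {spec.type_.__name__}, got {type(value).__name__}")
        allowed = {spec.name for spec in self.fields}
        problems += [f"unexpected field '{key}'" for key in record if key not in allowed]
        return problems


def publish(contract: DataContract, record: dict, sink: list[dict]) -> None:
    """Producer-side enforcement: a violating event is rejected before any consumer sees it."""
    problems = contract.violations(record)
    if problems:
        raise ValueError(f"{contract.dataset} v{contract.version}: " + "; ".join(problems))
    sink.append(record)


orders_created = DataContract(
    dataset="orders.created",
    version=1,
    owner="checkout-team",
    fields=(
        FieldSpec("order_id", str),
        FieldSpec("amount_cents", int),
        FieldSpec("currency", str),
        FieldSpec("coupon_code", str, required=False),
    ),
)
sink: list[dict] = []
publish(orders_created, {"order_id": "o-1", "amount_cents": 1250, "currency": "EUR"}, sink)
bad = {"order_id": "o-2", "amount_cents": "12.50", "currency": "EUR", "amount": 12.5}
```

Publishing `bad` would raise in the checkout service, where the code change happened, instead of surfacing weeks later as a broken feature or a wrong answer downstream.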

Three related tensions deserve attention from architects planning their AI Platform roadmap.

RAG is becoming the new ETL: messy, ungoverned, and brittle at scale. IBM’s SVP Dinesh Nirmal acknowledged that “pure RAG is not giving the optimal results that were expected.” Teams routinely blame the model when RAG fails, but the failure is almost always upstream: incomplete document repositories, outdated policies, inconsistent formatting, and no freshness monitoring. Most enterprises cannot answer a basic question like how quickly source changes propagate into indexes.
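The freshness question above can be answered with two timestamps per document: when the source last changed and when the index last ingested it. Below is a minimal sketch, assuming both are available as dictionaries keyed by a hypothetical document ID. It flags changes that have waited longer than an allowed lag, plus index entries whose source no longer exists, such as retired policies that are still retrievable.

```python
from __future__ import annotations

from datetime import datetime, timedelta


def index_freshness(
    source_modified: dict[str, datetime],
    indexed_at: dict[str, datetime],
    now: datetime,
    max_lag: timedelta,
) -> tuple[list[tuple[str, timedelta]], list[str]]:
    """Return (changes waiting longer than max_lag, orphaned index entries)."""
    stale = []
    for doc_id, modified in source_modified.items():
        indexed = indexed_at.get(doc_id)
        if indexed is None or indexed < modified:
            # The index holds no copy, or an older copy, of this document.
            waiting = now - modified
            if waiting > max_lag:
                stale.append((doc_id, waiting))
    # Deleted or retired sources that the retriever can still return.
    orphans = sorted(set(indexed_at) - set(source_modified))
    return sorted(stale), orphans


now = datetime(2025, 6, 1, 12, 0)
source = {
    "policy-a": datetime(2025, 6, 1, 9, 0),   # edited this morning
    "policy-b": datetime(2025, 5, 20),        # unchanged since last indexing
    "faq": datetime(2025, 6, 1, 11, 30),      # new, not indexed yet but within the allowed lag
}
index = {
    "policy-a": datetime(2025, 5, 31),
    "policy-b": datetime(2025, 5, 21),
    "old-policy": datetime(2025, 1, 1),
}
stale, orphans = index_freshness(source, index, now, max_lag=timedelta(hours=1))
```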

AI governance is already a crisis in motion. Komprise found that 54% of IT leaders rank AI governance as a core concern, nearly doubling from 29% in 2024, yet Cisco’s benchmark reveals only 12% of organizations describe their governance efforts as mature. Fewer than half have conducted ethical impact assessments. The organizational readiness for governing AI outputs is years behind the pace of AI adoption.

Most enterprises do not need custom models. Eugene Yan, now at Anthropic, argues that the model is not the product; the system around it is. A slightly worse model today will likely outperform a custom fine-tune built in the same timeframe, especially as LLM costs have dropped by two orders of magnitude in 18 months. The practical implication: invest in retrieval infrastructure, evaluation frameworks, and data products rather than training runs.

Zhamak Dehghani, creator of Data Mesh, offers perhaps the most provocative finding for architects: when AI agents receive small, focused context windows, task completion rates hit 85%. Give them large, unfiltered context and that drops to 45%. More data produces worse results. The modern data stack was built for human analysts who can tolerate ambiguity. AI agents need the right data, scoped precisely to the right domain. This inverts the traditional enterprise instinct to centralize and maximize data availability.
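The principle of scoping context to a domain can be sketched as filter-then-pack: discard chunks outside the agent's domain, then add the most relevant ones until a token budget is spent. This is an illustrative assumption, not a reproduction of Dehghani's experiment; the relevance scores are given rather than computed, and a word count stands in for a real tokenizer.

```python
from typing import NamedTuple


class ContextChunk(NamedTuple):
    domain: str
    relevance: float
    text: str


def build_context(chunks: list[ContextChunk], domain: str, token_budget: int) -> list[str]:
    """Keep only the agent's domain, then greedily pack the most relevant chunks into the budget."""
    scoped = sorted((c for c in chunks if c.domain == domain), key=lambda c: c.relevance, reverse=True)
    selected, used = [], 0
    for chunk in scoped:
        cost = len(chunk.text.split())  # crude token estimate: whitespace-separated words
        if used + cost <= token_budget:
            selected.append(chunk.text)
            used += cost
    return selected


chunks = [
    ContextChunk("billing", 0.9, "refunds take fourteen days"),
    ContextChunk("billing", 0.8, "invoices are sent monthly on the first"),
    ContextChunk("hr", 0.95, "parental leave is sixteen weeks"),
    ContextChunk("billing", 0.7, "vat is included"),
]
context = build_context(chunks, domain="billing", token_budget=8)
```

The HR chunk has the highest raw relevance but never reaches the billing agent, which is the inversion of the "maximize availability" instinct the paragraph describes.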

Where to begin

The AI transformation roadmap is not a model problem. It is a platform maturity problem with organizational dimensions that most enterprises systematically underweight. The companies that break through share three characteristics that have nothing to do with model sophistication. They built centralized feature platforms that eliminated training-serving skew. They invested in semantic layers and data contracts before investing in model complexity. And they treated the transformation as a four-to-six-year platform engineering program, not a twelve-month AI initiative.

For architects mid-journey, the actionable sequence is: assess data platform maturity honestly against the established frameworks (Gartner EIM, DCAM, Monte Carlo’s quality curve), close governance and quality gaps before adding AI components, build the feature layer and semantic layer before building the model layer, and plan for LLMOps as a fundamentally different discipline from MLOps.

The overhyped investments, including custom model training, standalone vector databases, and general-purpose copilots, matter less than the underhyped ones: data contracts, retrieval governance, LLM evaluation infrastructure, and the organizational readiness that BCG’s 10-20-70 rule quantifies. The lakehouse is necessary but not sufficient. The model is powerful but not the product. The semantic layer, where data meets meaning, is where the next phase of enterprise AI will be won or lost.