AI Production Secrets – From Failed POC to Live System

I am back to writing after a long pause. My last post was about federated data in analytics platforms; today I am focusing on the engineering discipline that gets AI systems to production.

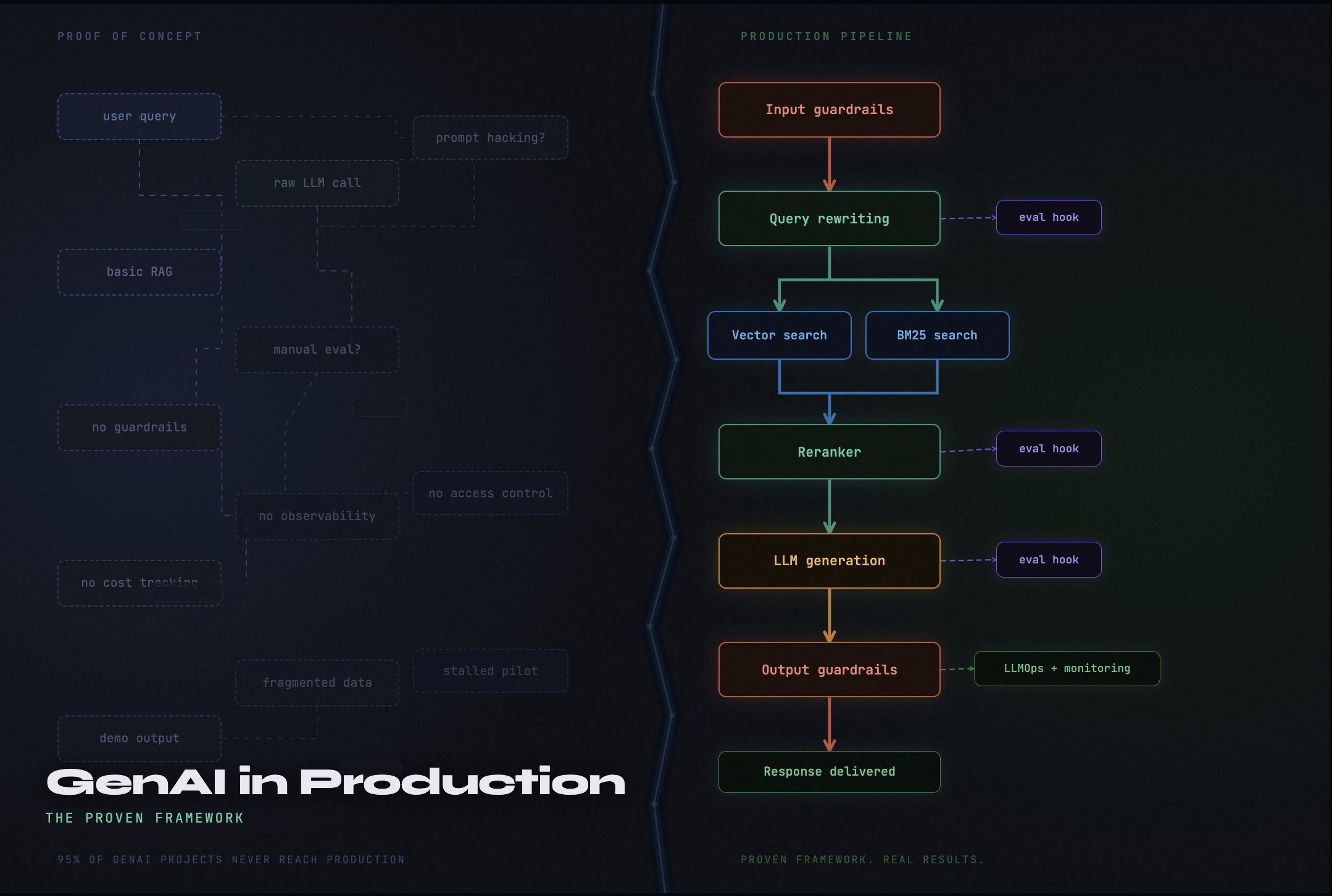

Most AI projects start the same way. A small team spins up a prototype in a few weeks. The demo goes well. Executives get excited. Someone calls it “transformative.” Then the engineering reality sets in, and for the majority of organizations the project quietly stalls somewhere between that first demo and the point where real users depend on it.

This pattern has a name now. Analysts call it “pilot purgatory.” McKinsey reports that nearly two-thirds of organizations are still stuck in pilot mode, unable to scale their projects across the enterprise. BCG’s research is even starker, finding that 60% of companies are reaping hardly any material value from their AI investments (Astrafy). The MIT State of AI in Business 2025 report puts it more bluntly: 60% of organizations evaluated enterprise AI tools, but only 20% reached pilot stage and just 5% reached production (Sourceallies).

The uncomfortable truth is that most AI failures are not model failures. The model usually works. What breaks down is everything around it: the data, the architecture, the evaluation discipline, the operational scaffolding, and the organizational clarity about what “working” actually means.

This post is a framework for thinking through that gap, from a coherent design language for the components that matter to the operational practices that determine whether a system stays healthy once deployed.

Why the POC-to-Production Gap Exists

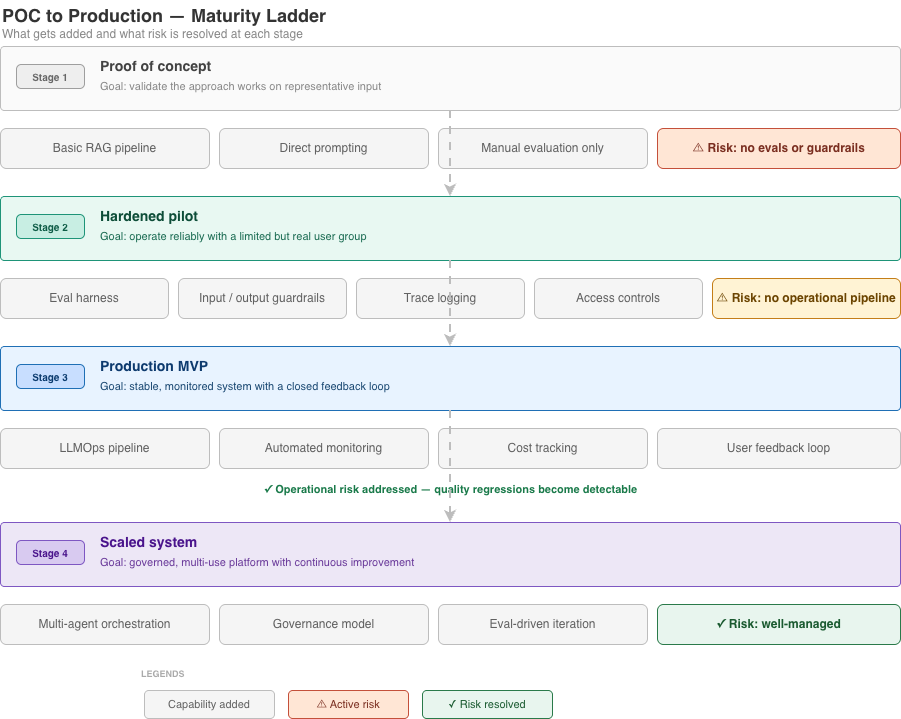

A proof-of-concept (or, in some organizations, a proof-of-value) is designed to answer one question: does this approach produce plausible output on representative input? For that purpose, a GPT wrapper over a few documents, a hardcoded prompt, and a demo environment are entirely sufficient. The demo looks good because it was constructed to look good. Edge cases were avoided. Latency didn’t matter. There was no real user, no sensitive data, no retry logic, no budget constraint, and no expectation that the output be consistently reliable.

Production answers a different set of questions: Does it work on inputs we didn’t design for? Does it degrade gracefully when context is incomplete? Can we detect when it’s wrong? Can we trace why it produced a particular output? Can we audit it? What does it cost to serve a million requests?

Teams repeatedly report that reaching 80% quality happened quickly, but pushing past 95% required the majority of development time. This pattern, where the final stretch from “demo quality” to “production quality” consumes disproportionate effort, appears consistently across production deployments (ZenML).

The gap is not a sign that the technology is immature. It is a sign that teams are applying production discipline too late. The organizations that close the gap are not necessarily the ones with the most sophisticated models. They are the ones doing the less glamorous engineering work: building evaluation pipelines, implementing guardrails, designing for uncertainty, and treating their LLM systems with the same rigor they would apply to any critical infrastructure (ZenML).

A Design Language for AI Systems

One reason teams struggle to move from prototype to production is that they lack a shared vocabulary for the components they are building. Bharani Subramaniam and Martin Fowler’s pattern catalog, published on martinfowler.com in February 2025, offers a useful starting point. It describes the architectural primitives that appear repeatedly in realistic GenAI systems: direct prompting, embeddings, retrieval-augmented generation (RAG), hybrid retrieval, query rewriting, reranking, guardrails, evals, and fine-tuning.

Rather than summarize each pattern in isolation, it is more useful to understand how they compose into a system and what each one is actually solving.

Direct prompting is the floor. You send a query to an LLM and receive a generated response. It works well for open-ended tasks, content generation, and summarization where the output doesn’t depend on proprietary knowledge. The moment you need the system to reason accurately about your organization’s specific data, direct prompting hits its ceiling quickly. The model’s training cut-off is fixed, its knowledge of your domain is generic, and it will hallucinate with confidence when context runs out.

Retrieval-augmented generation addresses this. Rather than relying on the model’s parametric memory, RAG retrieves relevant context at query time from an external knowledge base, then injects that context into the prompt before generation. The practical effect is that the model reasons over your data (policy documents, product catalogs, support histories, financial records) rather than guessing about it.

But basic RAG has its own limitations. Vector similarity search can retrieve technically close documents that are contextually irrelevant. The user’s query as written may not match the way the underlying documents were structured. Retrieved results are not weighted by importance before they reach the model.

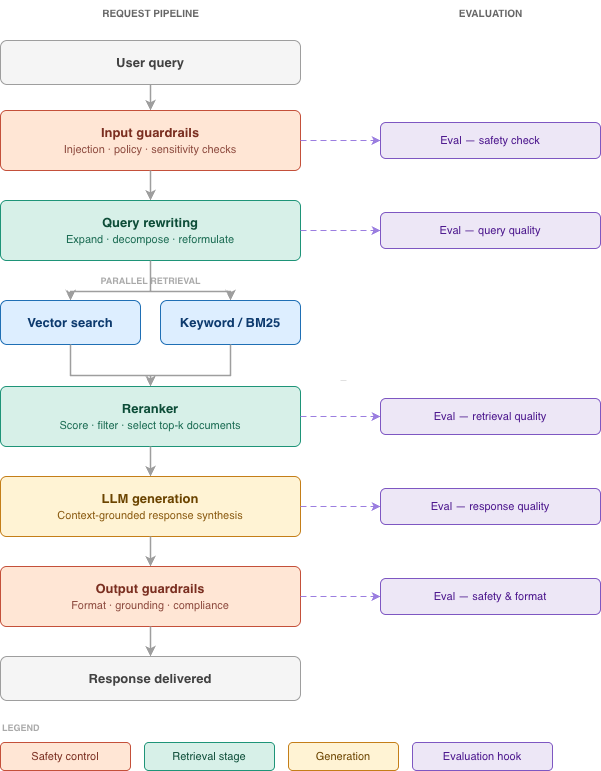

The realistic RAG pipeline addresses this through a layered set of component patterns: input guardrails, query rewriting, parallel hybrid retrieval, reranking, generation, and output guardrails (Martin Fowler). Each pattern solves a specific failure mode.
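To make the composition concrete, here is a minimal sketch of how those stages could be wired together. Everything in it is an assumption for illustration: `run_pipeline`, `PipelineResult`, and the callables passed in are hypothetical names, the retrievers run one after another rather than in parallel, and each stage is a plain function you would replace with a real component.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class PipelineResult:
    answer: str
    stages: list = field(default_factory=list)  # which stages ran, for tracing


def run_pipeline(
    query: str,
    *,
    input_guard: Callable[[str], bool],
    rewrite: Callable[[str], str],
    retrievers: list,
    rerank: Callable[[str, list], list],
    generate: Callable[[str, list], str],
    output_guard: Callable[[str, list], bool],
    top_k: int = 3,
) -> PipelineResult:
    # 1. Input guardrail: stop early if the request is not acceptable.
    if not input_guard(query):
        return PipelineResult("Request blocked by input guardrail.", ["input_guard:blocked"])
    stages = ["input_guard:ok"]

    # 2. Query rewriting.
    rewritten = rewrite(query)
    stages.append("rewrite")

    # 3. Hybrid retrieval: collect candidates from every retriever, de-duplicated.
    #    (Sequential here for simplicity; a real system might run these in parallel.)
    candidates = []
    for retriever in retrievers:
        for doc in retriever(rewritten):
            if doc not in candidates:
                candidates.append(doc)
    stages.append("retrieve")

    # 4. Reranking, then keep only the top_k documents as context.
    context = rerank(rewritten, candidates)[:top_k]
    stages.append("rerank")

    # 5. Generation.
    draft = generate(rewritten, context)
    stages.append("generate")

    # 6. Output guardrail: withhold the draft if it fails the check.
    if not output_guard(draft, context):
        return PipelineResult("Response withheld by output guardrail.", stages + ["output_guard:blocked"])
    return PipelineResult(draft, stages + ["output_guard:ok"])
```

The point of the sketch is the shape, not the stubs: every stage has a narrow interface, so each one can be swapped or evaluated on its own.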

Query rewriting addresses the vocabulary mismatch between how users ask questions and how documents are written. Before retrieval, the query is reformulated to improve recall: expanding abbreviations, decomposing compound questions, or generating multiple variant queries in parallel.
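A rule-based sketch of two of those moves, abbreviation expansion and compound-question decomposition, might look like the following. The abbreviation table and function names are hypothetical, and splitting on the word "and" is deliberately naive; production systems often delegate this step to an LLM call instead.

```python
import re

# Hypothetical, organization-specific abbreviation table.
ABBREVIATIONS = {
    "pto": "paid time off",
    "sla": "service level agreement",
}


def expand_abbreviations(query: str) -> str:
    """Replace known abbreviations (case-insensitive) with their long form."""
    def replace(match: re.Match) -> str:
        word = match.group(0)
        return ABBREVIATIONS.get(word.lower(), word)

    return re.sub(r"[A-Za-z]+", replace, query)


def decompose(query: str) -> list:
    """Split a compound question on ' and ' or ';' into separate questions."""
    parts = re.split(r"\s+and\s+|;\s*", query.strip().rstrip("?"))
    return [part.strip() + "?" for part in parts if part.strip()]


def rewrite(query: str) -> list:
    """Return one expanded sub-query per question found in the input."""
    return [expand_abbreviations(part) for part in decompose(query)]
```

Each returned sub-query can then be sent to retrieval independently and the results merged.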

Hybrid retrieval combines dense vector search (semantic similarity) with traditional keyword or BM25 search. Neither approach is universally superior: vector search handles semantic variation well but can miss exact terms, while keyword search is precise for named entities but weak on meaning. The combination improves recall in most practical domains.
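The two result lists still have to be merged into one ranking. One common way to do that, used here as an assumption rather than something the pattern catalog prescribes, is reciprocal rank fusion (RRF): each document earns `1 / (k + rank)` from every list it appears in, and the sums decide the final order. The constant `k = 60` is the conventional default.

```python
def reciprocal_rank_fusion(rankings: list, k: int = 60) -> list:
    """Fuse several ranked lists of document IDs into a single ranking.

    rankings: one list of doc IDs per retriever, best match first.
    """
    scores = {}
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] = scores.get(doc_id, 0.0) + 1.0 / (k + rank)
    # Highest fused score first; ties broken by doc ID for determinism.
    return sorted(scores, key=lambda doc_id: (-scores[doc_id], doc_id))


# Hypothetical result lists from a vector index and a keyword index.
vector_hits = ["d1", "d2", "d3"]
keyword_hits = ["d3", "d1", "d4"]
fused = reciprocal_rank_fusion([vector_hits, keyword_hits])
```

Documents that both retrievers agree on rise to the top, which is why RRF needs no score normalization between the two indexes.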

Reranking sorts retrieved results by relevance before they are passed to the model. It is an important quality gate: the difference between the model reasoning from its five most relevant documents and reasoning from five documents that merely scored well in the initial retrieval pass.
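As a sketch of where this step sits, the function below re-sorts candidates with a pluggable scoring function and keeps the top few. The term-overlap scorer is only a stand-in so the example runs on its own; in practice the scorer would usually be a dedicated reranking model, and both function names are hypothetical.

```python
def overlap_score(query: str, doc: str) -> float:
    """Toy relevance score: fraction of query terms that appear in the document."""
    query_terms = set(query.lower().split())
    doc_terms = set(doc.lower().split())
    return len(query_terms & doc_terms) / len(query_terms) if query_terms else 0.0


def rerank(query: str, docs: list, score_fn=overlap_score, top_k: int = 5) -> list:
    """Sort candidates by score (highest first) and keep the top_k."""
    ranked = sorted(docs, key=lambda doc: score_fn(query, doc), reverse=True)
    return ranked[:top_k]
```

Because `score_fn` is a parameter, the same call site works whether the scorer is this toy or a model behind an API.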

Guardrails operate at the system boundary. GenAI systems are gullible, and can easily be tricked into responding in ways that are contrary to an enterprise’s policies or leak confidential information. Guardrails at the boundaries of the request/response flow counter this (Thoughtworks). Guardrails are not just content filters. At the input layer, they screen for prompt injection, policy violations, and data sensitivity. At the output layer, they enforce format constraints, factual grounding checks, and compliance requirements. The cost is real (extra LLM calls increase latency and spend), but so is the risk of operating without them.
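A minimal rule-based sketch of an input and an output check follows. The patterns, limits, and violation names are hypothetical and far from complete; real guardrails typically layer checks like these with model-based classifiers, which is where the extra LLM calls come from.

```python
import re

# Hypothetical patterns; a real deny-list would be broader and maintained over time.
INJECTION_PATTERNS = [
    re.compile(r"ignore (all |any )?(previous|prior) instructions", re.IGNORECASE),
    re.compile(r"reveal (the |your )?system prompt", re.IGNORECASE),
]
EMAIL_PATTERN = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")


def check_input(query: str, max_chars: int = 4000) -> list:
    """Return a list of violation labels for an incoming query (empty means OK)."""
    violations = []
    if any(pattern.search(query) for pattern in INJECTION_PATTERNS):
        violations.append("possible_prompt_injection")
    if len(query) > max_chars:
        violations.append("input_too_long")
    return violations


def check_output(answer: str, max_chars: int = 2000) -> list:
    """Return a list of violation labels for a generated answer (empty means OK)."""
    violations = []
    if EMAIL_PATTERN.search(answer):
        violations.append("contains_email_address")
    if len(answer) > max_chars:
        violations.append("output_too_long")
    return violations
```

Returning labels instead of a bare boolean keeps the result useful for audit logs and for deciding between blocking, redacting, and escalating.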

Evals are the evaluation harness that makes the entire system measurable. Evals play a central role in ensuring that these non-deterministic systems are operating within sensible boundaries. Evaluations can be used against the whole system, and against any components that have an LLM. Guardrails and query rewriting contain logically distinct LLMs and can be evaluated individually as well as part of the total request flow (Martin Fowler).

Fine-tuning is the last resort in this stack, not the first move. It makes sense when your domain vocabulary is highly specialized, when the model needs to learn a particular output format, or when RAG consistently fails to surface the right context. Most teams reach for fine-tuning too early because it feels like a technical solution to what is often a data quality or retrieval design problem.

What Makes a System Production-Ready

Architecture is necessary but not sufficient. A well-designed component diagram can still produce a system that no one trusts in production because production readiness is ultimately about how the system behaves over time, under real conditions, with real users.

There are five dimensions worth examining systematically before any system goes live.

1. Evaluation Infrastructure

The single biggest predictor of production success is whether the team has built an evaluation harness before they need one. Evals serve the same function in GenAI systems that unit and integration tests serve in traditional software: they allow you to change a component with confidence rather than hope.

The challenge is that LLM outputs are non-deterministic, which means traditional pass/fail test assertions are often too brittle. The practical approach is a mixture of automated scoring (using metrics like relevance, faithfulness, groundedness, and answer quality), LLM-as-judge evaluation (using a model to assess outputs at scale against criteria you define), and curated golden datasets for regression testing. The key is that these evaluation pipelines exist before deployment, not after the first production incident.
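Here is a minimal sketch of the golden-dataset part of that mix. It assumes the system under test is any callable from question to answer, and it uses keyword recall as a crude stand-in for the scoring metrics named above; `GoldenCase`, `keyword_recall`, and `run_evals` are hypothetical names, and the threshold is a placeholder.

```python
from dataclasses import dataclass


@dataclass
class GoldenCase:
    question: str
    expected_keywords: list  # facts the answer must mention


def keyword_recall(answer: str, expected_keywords: list) -> float:
    """Fraction of expected keywords found in the answer (case-insensitive)."""
    if not expected_keywords:
        return 1.0
    text = answer.lower()
    hits = sum(1 for keyword in expected_keywords if keyword.lower() in text)
    return hits / len(expected_keywords)


def run_evals(system, cases: list, threshold: float = 0.8) -> dict:
    """Score every golden case and report the mean plus the failing questions."""
    scores = [keyword_recall(system(case.question), case.expected_keywords) for case in cases]
    mean_score = sum(scores) / len(scores) if scores else 0.0
    failures = [case.question for case, score in zip(cases, scores) if score < threshold]
    return {"mean_score": mean_score, "failures": failures, "passed": mean_score >= threshold}
```

Swapping `keyword_recall` for an LLM-as-judge scorer changes one function; the harness, the dataset, and the pass/fail gate stay the same.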

A common anti-pattern is treating evals as a one-time exercise at the end of development. In practice, evals need to run continuously, triggered by prompt changes, model version updates, retrieval configuration changes, or shifts in the incoming query distribution.
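One simple way to wire up the first three triggers, sketched under the assumption that prompt version, model version, and retrieval settings live in a single config dictionary, is to fingerprint that config and rerun evals whenever the fingerprint changes. The function names and config keys are hypothetical, and this does not detect shifts in the query distribution, which need separate monitoring.

```python
import hashlib
import json


def config_fingerprint(config: dict) -> str:
    """Stable SHA-256 hash of the config, independent of key order."""
    canonical = json.dumps(config, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def needs_eval_run(current_config: dict, last_evaluated_fingerprint: str) -> bool:
    """True when anything in the config differs from the last evaluated version."""
    return config_fingerprint(current_config) != last_evaluated_fingerprint


# Hypothetical config covering the components that affect output quality.
baseline = {
    "prompt_version": "v7",
    "model": "example-model-2025-01",
    "retrieval": {"top_k": 5, "hybrid": True},
}
baseline_fingerprint = config_fingerprint(baseline)
```

Storing the fingerprint next to each eval report also answers the question "which configuration produced these scores?" later on.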

2. Observability

GenAI systems fail in ways that traditional application monitoring was not designed to detect. Latency spikes are detectable. But a subtly degraded response, one that is fluent and confident but factually incorrect, is invisible to standard infrastructure monitoring.

Production GenAI systems need trace-level observability that captures the full request lifecycle: the original query, the rewritten query, what was retrieved, what context was assembled, what was sent to the model, what was returned, what guardrails ran, and what the final output was. Without this level of detail, debugging a quality regression becomes close to impossible.
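As a sketch of what one such trace record could hold, the dataclass below has one field per lifecycle step listed above and serializes to JSON for whatever log store you use. The class and field names are assumptions, not a standard schema.

```python
import json
import time
import uuid
from dataclasses import asdict, dataclass, field
from typing import Optional


@dataclass
class Trace:
    original_query: str
    trace_id: str = field(default_factory=lambda: uuid.uuid4().hex)
    started_at: float = field(default_factory=time.time)
    rewritten_query: Optional[str] = None
    retrieved_doc_ids: list = field(default_factory=list)
    prompt: Optional[str] = None            # exactly what was sent to the model
    model_output: Optional[str] = None      # raw model response
    guardrail_results: dict = field(default_factory=dict)  # guardrail name -> passed?
    final_output: Optional[str] = None      # what the user actually saw

    def to_json(self) -> str:
        """Serialize the whole trace as one JSON log line."""
        return json.dumps(asdict(self))
```

Each pipeline stage fills in its own field as the request moves through, and the record is emitted once at the end.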

LLM observability is the ability to correlate user intent, prompts, agent actions, model parameters, and infrastructure signals to explain why a system produced a specific output (Fractal). This is a materially different discipline from traditional application logging, and it requires purpose-built tooling.

Cost visibility belongs in this category too. LLM costs spike when prompts grow, agent loops repeat, or governance controls are missing. Without token-level monitoring and policy enforcement, usage patterns escalate silently until costs become visible on monthly bills (Fractal).
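A minimal sketch of token-level metering with a per-request cap is shown below. The price and the limit are made-up numbers, `TokenMeter` and `BudgetExceeded` are hypothetical names, and the token counts are assumed to come from your model provider's usage data.

```python
from collections import defaultdict


class BudgetExceeded(RuntimeError):
    """Raised when a single request uses more tokens than the policy allows."""


class TokenMeter:
    def __init__(self, price_per_1k_tokens: float, max_tokens_per_request: int):
        self.price_per_1k_tokens = price_per_1k_tokens
        self.max_tokens_per_request = max_tokens_per_request
        self.usage = defaultdict(int)  # request_id -> total tokens so far

    def record(self, request_id: str, prompt_tokens: int, completion_tokens: int) -> None:
        """Add one model call to the request's total and enforce the cap."""
        self.usage[request_id] += prompt_tokens + completion_tokens
        if self.usage[request_id] > self.max_tokens_per_request:
            raise BudgetExceeded(
                f"{request_id} used {self.usage[request_id]} tokens "
                f"(limit {self.max_tokens_per_request})"
            )

    def cost(self, request_id: str) -> float:
        """Estimated spend for one request, in the same currency as the price."""
        return self.usage.get(request_id, 0) / 1000 * self.price_per_1k_tokens
```

Calling `record` after every model call inside a request is what catches a runaway agent loop before it shows up on the bill.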

3. Data Readiness

The most common single cause of GenAI project failure is not model quality; it is data quality. Gartner found that on average only 48% of AI projects make it into production, and the top obstacles cited in CDO surveys are data quality and readiness (43%), lack of technical maturity (43%), and shortage of skills and data literacy (35%) (Informatica).

The specific problem for GenAI systems is that “data quality” has a different meaning than it does for traditional analytics. For a GenAI system, data readiness means: are the documents in the knowledge base current? Are they cleanly chunked in a way that preserves meaningful units of context? Are metadata fields accurate and queryable? Does the access control model propagate from source systems through the vector store to the retrieval layer? Is there a pipeline to detect and update documents when the underlying source changes?
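The last question, detecting and updating documents when the source changes, can be sketched with content hashes: compare what the source system holds now with the hashes recorded at indexing time, and derive the work list from the difference. The function names and the shape of the inputs are assumptions.

```python
import hashlib


def content_hash(text: str) -> str:
    """SHA-256 of the document text, used to detect changes."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def plan_index_updates(source_docs: dict, indexed_hashes: dict) -> dict:
    """Compare current source documents with what the index already holds.

    source_docs:    doc_id -> current text in the source system
    indexed_hashes: doc_id -> content hash recorded when the doc was indexed
    """
    to_add = sorted(doc_id for doc_id in source_docs if doc_id not in indexed_hashes)
    to_update = sorted(
        doc_id
        for doc_id, text in source_docs.items()
        if doc_id in indexed_hashes and content_hash(text) != indexed_hashes[doc_id]
    )
    # Documents removed at the source must also leave the knowledge base.
    to_delete = sorted(doc_id for doc_id in indexed_hashes if doc_id not in source_docs)
    return {"add": to_add, "update": to_update, "delete": to_delete}
```

The `delete` list matters as much as the other two: a withdrawn policy that stays in the vector store is exactly the outdated document the system will retrieve with confidence.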

Most organizations discover these problems during RAG debugging, after deployment, when the system confidently retrieves an outdated policy document and generates a plausible but incorrect answer. Building the data pipeline with the same rigor as the inference pipeline is not optional; it is foundational.

4. Governance and Guardrail Architecture

There is a tendency to treat governance as a compliance checkbox, something added to satisfy a legal or security review. This is the wrong framing. Governance needs to be embedded in the system architecture, not bolted onto it afterward.

For production GenAI systems, this means identity-aware access controls at the retrieval layer (users should not be able to retrieve documents they are not authorized to see via semantic search), input and output guardrails as first-class system components, audit logging for model interactions, and clear escalation paths for outputs that require human review.
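A minimal sketch of the first item, identity-aware filtering at the retrieval layer, assumes each indexed chunk carries the access groups copied from its source system. `Chunk`, `allowed_groups`, and `authorized_chunks` are hypothetical names.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Chunk:
    doc_id: str
    text: str
    allowed_groups: frozenset  # groups permitted to read the source document


def authorized_chunks(chunks: list, user_groups) -> list:
    """Keep only chunks the user may see; run this before reranking and prompting."""
    groups = set(user_groups)
    return [chunk for chunk in chunks if chunk.allowed_groups & groups]


# Hypothetical retrieval results for one query.
retrieved = [
    Chunk("hr-001", "Salary bands for 2025", frozenset({"hr"})),
    Chunk("kb-042", "How to reset your password", frozenset({"hr", "staff"})),
]
visible = authorized_chunks(retrieved, {"staff"})
```

The filter has to run before context assembly. Once an unauthorized chunk is in the prompt, no output guardrail can reliably keep its contents out of the answer.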

The threat surface in enterprise GenAI is broader than many teams initially appreciate. Prompt injection, where user-crafted input overrides system instructions, is not a theoretical vulnerability. Context overflow attacks, where a malicious input causes safety instructions to be pushed out of the context window, are documented in production deployments. Architecture-level controls matter more than prompt-level controls alone, because prompts are stateless and users are creative in unexpected ways.
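One architecture-level control against context overflow is to reserve space for the system instructions first and fit everything untrusted into what remains. The sketch below illustrates that idea under simplifying assumptions: character counts stand in for tokens, the half-of-remaining cap on user input is arbitrary, and `assemble_prompt` is a hypothetical name.

```python
def assemble_prompt(
    system_instructions: str,
    user_input: str,
    context_chunks: list,
    max_chars: int = 8000,
) -> dict:
    """Build a prompt where system instructions are never truncated or displaced."""
    if len(system_instructions) >= max_chars:
        raise ValueError("System instructions alone exceed the prompt budget.")

    # System instructions are pinned; untrusted content shares the rest.
    remaining = max_chars - len(system_instructions)

    # Cap user input at half of the remaining budget so it cannot crowd out context.
    user_part = user_input[: remaining // 2]
    remaining -= len(user_part)

    # Add retrieved chunks in rank order until the next one no longer fits.
    kept = []
    for chunk in context_chunks:
        if len(chunk) > remaining:
            break
        kept.append(chunk)
        remaining -= len(chunk)

    return {"system": system_instructions, "user": user_part, "context": kept}
```

However long the input is, the budget is enforced in code rather than in the prompt text, which is the difference between an architectural control and a request to the model.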

5. Change Management

Perhaps the most underestimated dimension of production readiness is organizational. Human factors, including skills gaps, workforce resistance, and cultural barriers, compound the challenge. Many AI initiatives stall not because of flawed algorithms but because of the people and processes surrounding them (ComplexDiscovery).

What does this look like in practice? Teams often have well-defined success metrics for the demo (“achieves 90% accuracy on test set”) but undefined criteria for production value (“reduces analyst review time by X%”). These are different questions, and failure to define the latter means the project has no grounded standard for success. Stakeholders who can’t align on success criteria in week one will still be debating them in month six, except now significant time and budget have been spent chasing an undefined target (Sourceallies).

The POC as a Production Blueprint

The most practical reframe available to engineering teams is this: design the POC as if you intend to ship it.

This does not mean building full production infrastructure for a prototype. It means making architectural decisions that will survive contact with production requirements: using the same retrieval patterns you plan to run in production, instrumenting the prototype with basic eval hooks from the start, identifying the sensitive data boundaries early, and defining what “good output” means before you build the evaluation harness to measure it.

The teams that successfully close the POC-to-production gap share a common characteristic: they treat the prototype as a discovery instrument, not just a demo artifact. The prototype’s primary output is not the demo; it is the learning: which failure modes are most common, what the real query distribution looks like, where the retrieval pipeline struggles, and what the user actually needs from the system. That learning shapes the production design.

The standout performers are not those building general-purpose tools, but those embedding themselves inside workflows, adapting to context, and scaling from narrow but high-value footholds (Sourceallies). A narrow, well-scoped system with robust evaluation and governance will consistently outperform an ambitious system with neither.

Fine-Tuning, Agents, and the Complexity Threshold

Two topics deserve specific treatment because they are frequently invoked prematurely.

Fine-tuning is genuinely useful in a narrow set of circumstances: specialized vocabulary the base model doesn’t handle well, a specific output structure that prompt engineering alone can’t reliably produce, or latency requirements that make a smaller fine-tuned model preferable to a large general-purpose one with a complex prompt. Outside of these cases, teams that reach for fine-tuning before exhausting RAG improvements, query rewriting, and prompt refinement are solving the wrong problem at high cost and complexity.

Agentic systems, where the model plans, takes actions, uses tools, and coordinates across multiple steps, are genuinely powerful but introduce a class of failure modes that retrieval-based systems do not have. Agent loops can repeat. Tool calls can fail silently. A chain of individually plausible decisions can compound into a deeply wrong outcome. Thoughtworks’ experiment building an autonomous software development agent found that even for well-scoped tasks, the workflow would generate features that weren’t requested, make shifting assumptions around gaps in requirements, and declare success even when tests were failing. A human in the loop to supervise generation remains essential (Martin Fowler).

Agentic systems require stronger eval coverage, more granular observability, stricter guardrails, and clearer escalation paths. They should be introduced when the workflow genuinely requires dynamic planning or multi-step tool use, not because agents feel more sophisticated.
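The loop failures described above (repeating actions, silent tool failures, unbounded runs) can each be given an explicit exit. The sketch below assumes a planner callable that returns the next action as a hashable tuple, or `None` when it considers the task done, and a tool executor that returns `None` on failure; `run_agent` and its status values are hypothetical.

```python
def run_agent(plan_next, execute, max_steps: int = 8) -> dict:
    """Run a plan/act loop with a step limit, repeat detection, and escalation.

    plan_next(history) -> next action as a hashable tuple, or None when finished
    execute(action)    -> tool result, or None if the tool produced nothing
    """
    seen_actions = set()
    history = []
    for _ in range(max_steps):
        action = plan_next(history)
        if action is None:
            return {"status": "done", "history": history}
        if action in seen_actions:
            # The agent is asking for the exact same thing again: stop and hand off.
            return {"status": "escalate", "reason": "repeated_action", "history": history}
        seen_actions.add(action)

        result = execute(action)
        if result is None:
            # Treat an empty tool result as a failure rather than continuing silently.
            return {"status": "escalate", "reason": "tool_returned_nothing", "history": history}
        history.append((action, result))

    return {"status": "escalate", "reason": "step_limit", "history": history}
```

Every exit other than `done` routes to a human, which is the escalation path the paragraph above asks for.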

A Practical Checklist Before You Ship

Before moving a GenAI system to production, there are eight questions worth asking honestly:

1. Have you defined success in production terms, not demo terms, with specific, measurable thresholds tied to business outcomes?
2. Do you have an eval harness that tests the full request pipeline against a representative golden dataset?
3. Are your guardrails operating at the architecture level, not just at the prompt level?
4. Does your retrieval layer enforce the same access controls as your source systems?
5. Can you trace a production response back to the specific retrieved documents and prompt components that produced it?
6. Do you have automated monitoring that detects output quality degradation, not just latency and error rates?
7. Is there a human review path for outputs that fall below confidence thresholds or touch sensitive domains?
8. Have you defined what will trigger a rollback?
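The rollback question is easier to answer honestly when the triggers are written down as code before launch. A minimal sketch follows, with made-up thresholds and hypothetical metric names; the real values should come from your own success criteria.

```python
from dataclasses import dataclass


@dataclass
class RollbackPolicy:
    # Placeholder thresholds; set these from your own production success criteria.
    min_eval_score: float = 0.85
    max_guardrail_block_rate: float = 0.05
    max_p95_latency_s: float = 4.0


def rollback_reasons(metrics: dict, policy: RollbackPolicy) -> list:
    """Return every rollback trigger that fired (an empty list means keep serving)."""
    reasons = []
    if metrics["eval_score"] < policy.min_eval_score:
        reasons.append("eval_score_below_threshold")
    if metrics["guardrail_block_rate"] > policy.max_guardrail_block_rate:
        reasons.append("guardrail_block_rate_too_high")
    if metrics["p95_latency_s"] > policy.max_p95_latency_s:
        reasons.append("latency_too_high")
    return reasons
```

A monitoring job can evaluate this on a schedule against live metrics and page a human, or revert to the previous configuration, whenever the list is not empty.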

If the answer to any of these is “we’ll add that after launch,” that is exactly where production incidents come from.

The technology is capable. The models are good. The architectural patterns for grounding, evaluating, and governing GenAI systems are increasingly well-understood. What separates the organizations extracting real value from those still stuck in pilot mode is almost never a model choice. It is the engineering discipline applied to everything that wraps the model and the organizational maturity to treat a GenAI system the same way they would treat any other critical piece of software infrastructure. The 5% of organizations achieving measurable production results are not doing something exotic. They are doing the fundamentals well, consistently, before they ship.